
Imagine it’s 3 PM on a Tuesday, and you’ve spent the entire day trying to figure out why your ticketing system won’t stop exploding with complaints about slow application performance. Everything’s green on the monitoring dashboard: the routers are online, the switches are responding, and bandwidth utilization sits at 60%. Yet users across three offices can barely load the CRM system. You spend hours checking individual devices, running traceroutes, and analyzing logs before discovering that a gradual increase in packet loss on a WAN link has been degrading performance for the past week. Your monitoring tool never alerted you because technically, everything was “up.”

This scenario plays out daily in IT departments relying on basic network monitoring tools that only track device availability. But implementing effective observability and advanced monitoring reduces unplanned downtime and outage costs, according to IDC.

Here’s everything you need to know about network performance management, from its core features to implementing it in your organization.

» Make sure you know why you need network monitoring software

The limitations of basic network monitoring

One of the main challenges IT teams face is that basic network monitoring tells you when something breaks, but not why it’s breaking, when it might be on the verge of breaking, and how to prevent it from happening again. For example, a router shows “online” in your dashboard while users complain about unbearably slow cloud application performance. Your monitoring tool confirms everything is “up,” but your business is effectively down.

This is because traditional network monitoring operates like a simple health check: it pings devices to confirm they’re powered on and connected. When a switch goes offline, you get an alert; when a router reboots, you see the event in your logs. This is fine for detecting catastrophic failures, but that’s where it stops. Modern business networks need more sophisticated visibility.

This gap between device status and actual network performance costs organizations time, money, and user trust.

“A survey of 261 e-commerce and technology leaders reveals the majority of companies are losing millions of dollars every year to Internet disruptions.”

What affects network performance

Network performance doesn’t exist in isolation. Multiple internal and external factors continuously influence how data flows through your infrastructure:

- Bandwidth versus throughput gaps frequently confuse troubleshooting efforts. A 1Gbps link should theoretically support 1,000 Mbps throughput, but real-world performance rarely reaches this ceiling.

- Congestion occurs when traffic demand exceeds available capacity at any network point. Unlike complete link saturation, congestion often manifests as sporadic slowdowns during peak usage periods, such as morning traffic spikes when employees log in.

- Device density impacts wireless networks and cellular connections more severely than wired infrastructure. Fifty users connecting to a single wireless access point all share the available airtime and compete for channel access.

- QoS misconfigurations cause inappropriate traffic prioritization or no prioritization at all. Voice and video traffic might compete equally with file downloads and backup operations, resulting in choppy calls and frozen video feeds.

- Environmental factors including power fluctuations, cooling issues, and physical interference affect network equipment performance. Overheating switches might begin dropping packets intermittently, or electrical interference could introduce errors on copper connections.

- Cloud latency and routing inefficiencies impact hybrid architectures where applications span multiple locations. Traffic between your office and a cloud-hosted database might traverse suboptimal paths through multiple internet exchange points, or your ISP’s routing to a specific cloud region might introduce unnecessary latency.
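To put a number on the bandwidth versus throughput gap, here is a minimal sketch of the best-case TCP throughput on an Ethernet link. It assumes standard 1,500-byte frames, IPv4, and TCP without options; the function name is illustrative, and real links will land lower once congestion and retransmissions come into play.

```python
# Per-frame byte counts for standard Ethernet carrying IPv4 + TCP (no options).
MTU = 1500                # largest IP packet carried in one Ethernet frame
IP_HEADER = 20
TCP_HEADER = 20
ETHERNET_OVERHEAD = 38    # preamble/SFD 8 + header 14 + FCS 4 + inter-frame gap 12


def max_tcp_goodput_mbps(link_mbps):
    """Upper bound on application-level throughput for a given line rate."""
    payload = MTU - IP_HEADER - TCP_HEADER   # 1,460 bytes of user data
    on_wire = MTU + ETHERNET_OVERHEAD        # 1,538 bytes occupied on the wire
    return link_mbps * payload / on_wire


print(round(max_tcp_goodput_mbps(1000), 1))  # 949.3
```

Even before any congestion, roughly 5% of a 1Gbps link goes to protocol overhead, so a throughput reading of about 949 Mbps is already the ceiling.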

» Here’s how cloud innovation enhances IT management

What’s at stake without a proper solution

The business impact of inadequate network visibility extends far beyond IT frustration. Without an effective solution, you face the following costly challenges:

- Reactive firefighting instead of proactive management: IT teams spend their time investigating user complaints and troubleshooting mysterious slowdowns rather than optimizing infrastructure before problems emerge. Reactive monitoring without proactive monitoring can lead to significant downtime, rushed troubleshooting, and higher remediation costs, according to Optimum.

- SLA violations and user dissatisfaction: When you can’t measure actual network performance, you can’t maintain service level agreements or demonstrate compliance. The margin is small: a “99.9% uptime” commitment allows less than 9 hours of total downtime per year, and missing it can lead to financial penalties, reputational damage, and legal action, according to Motadata. Users lose confidence in IT’s ability to support business operations, and in MSP environments, clients question the value of your services.

- Capacity guesswork wastes resources: Without visibility into bandwidth utilization patterns, traffic flows, and capacity trends, IT teams either overprovision expensive network resources “just to be safe” or underprovision and suffer performance degradation. Neither serves the business well.
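The downtime allowance behind an uptime percentage is simple arithmetic. The sketch below assumes a 365-day year and a hypothetical helper name; it is only meant to show how quickly the budget shrinks as the target rises.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def downtime_budget_hours(uptime_percent):
    """Hours of downtime per year that still satisfy the uptime target."""
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)


for target in (99.0, 99.9, 99.99):
    print(f"{target}% uptime -> {downtime_budget_hours(target):.2f} hours/year")
```

A 99.9% target works out to 8.76 hours a year, which is where the “less than 9 hours” figure comes from.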

The challenge intensifies as organizations adopt hybrid architectures that blend on-premises infrastructure, multiple cloud platforms, remote workers, and mobile endpoints. Each network type introduces distinct performance management requirements:

- Enterprise LAN/WAN environments prioritize bandwidth utilization, quality of service (QoS) configuration, and device health monitoring. Congestion at a single aggregation switch or a misconfigured routing protocol can cascade across the entire internal network, affecting collaboration tools, file servers, and internal applications. These networks typically maintain relatively stable topology, but traffic patterns shift constantly based on business operations.

- Hybrid cloud and edge networks demand visibility across dynamic traffic paths where workloads move between AWS, Azure, local data centers, and edge locations. Latency between your office and a cloud-hosted ERP system depends on your ISP’s routing, internet exchange points, the cloud provider’s network, and load balancers distributing traffic. Without monitoring these paths end-to-end, you’re blind to where performance degrades.

- Mobile cellular networks and field operations operate under entirely different constraints. Unlike fixed infrastructure, mobile performance depends on constantly shifting signal strength, tower handoffs, device density in a given cell, fluctuating backhaul congestion, and radio frequency interference or even physical obstacles. A warehouse using mobile devices for inventory management might experience perfect connectivity at 8 AM and severe latency spikes by 10 AM as more workers clock in and concurrent sessions overwhelm the local cell site.

Network performance management (NPM) operates on a fundamentally different principle than basic monitoring: instead of simply checking whether devices are online, NPM continuously measures how well your network performs its actual job of moving data between users, applications, and services.

This shift from binary status checks to comprehensive performance analysis gives IT teams the visibility needed to maintain, optimize, and plan network infrastructure strategically.

6 features of network performance management for better IT operations

Network performance data only creates value when translated into actionable outcomes. The NPM workflow converts raw metrics into business results through several components that work together to provide comprehensive network visibility and control:

1. Metrics collection

NPM captures real-time network data from every relevant source, such as routers, switches, firewalls, wireless access points, and cloud endpoints. The actual metrics include:

- Latency: Measures the time required for data to travel from source to destination. High latency directly impacts user experience for interactive applications, video conferencing, and real-time collaboration tools. Even modest latency increases (from 20ms to 50ms) can noticeably degrade application responsiveness and user satisfaction.

- Jitter: Tracks variation in latency over time. Consistent 50ms latency performs better for voice and video than fluctuating latency that varies between 20ms and 80ms, because applications can’t compensate for unpredictable variations. Jitter measurements help identify unstable links, congested paths, and network segments requiring QoS optimization.

- Packet loss: Indicates the percentage of data packets that fail to reach their destination, requiring retransmission. Even 1-2% packet loss significantly degrades TCP performance and causes noticeable quality issues for real-time applications. Sustained packet loss above 5% typically indicates serious infrastructure problems requiring immediate attention.

- Throughput: Measures actual data transfer rates achieved between endpoints, which often falls short of theoretical bandwidth capacity due to protocol overhead, congestion, and inefficient routing. Monitoring throughput alongside bandwidth utilization reveals whether you’re effectively using available capacity or whether bottlenecks limit performance.

- Device utilization: Tracks CPU, memory, and interface load across network infrastructure. A router showing 95% CPU utilization may still forward packets correctly, but it lacks the headroom to handle traffic spikes or process additional features.
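As a rough illustration of how latency, jitter, and packet loss fall out of the same probe data, here is a minimal sketch. It assumes you already have a list of round-trip times with `None` for probes that got no reply; the function name is hypothetical, and jitter is simplified to the mean change between consecutive replies rather than the smoothed estimator real-time protocols use.

```python
from statistics import mean


def summarize_probes(rtts_ms):
    """rtts_ms: one entry per probe sent; None marks a probe with no reply."""
    replies = [r for r in rtts_ms if r is not None]
    loss_pct = 100 * (len(rtts_ms) - len(replies)) / len(rtts_ms)
    avg = mean(replies) if replies else None
    # Simplified jitter: average absolute change between consecutive replies.
    deltas = [abs(b - a) for a, b in zip(replies, replies[1:])]
    jitter = mean(deltas) if deltas else 0.0
    return {"avg_latency_ms": avg, "jitter_ms": jitter, "loss_pct": loss_pct}


steady = summarize_probes([50, 50, 50, 50, 50])
bursty = summarize_probes([20, 80, 20, None, 80])
print(steady)  # average 50 ms, jitter 0, loss 0%
print(bursty)  # average 50 ms, jitter 60, loss 20%
```

Both links average 50ms, which is why average latency alone would hide the difference that voice and video users feel.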

Unlike basic monitoring that polls devices periodically, NPM systems collect continuous telemetry using multiple protocols: SNMP for device statistics, NetFlow or sFlow for traffic analysis, and API integration with cloud services.
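For SNMP specifically, bandwidth utilization is usually derived from two readings of an interface octet counter rather than read directly. The sketch below assumes you have already collected the counter values and the interface speed by whatever means; the function name and sample numbers are illustrative.

```python
def link_utilization_pct(prev_octets, curr_octets, interval_s, if_speed_bps,
                         counter_bits=32):
    """Utilization between two octet-counter samples taken interval_s apart."""
    modulus = 2 ** counter_bits
    # Modulo arithmetic absorbs a single counter wrap between the two polls.
    delta_octets = (curr_octets - prev_octets) % modulus
    return 100 * delta_octets * 8 / (interval_s * if_speed_bps)


# A 32-bit counter that wrapped between polls on a 1 Gbps link, 30 s apart.
print(link_utilization_pct(4_000_000_000, 1_955_032_704, 30, 1_000_000_000))  # 60.0
```

At 60% of 1Gbps a 32-bit counter wraps in under a minute, so on fast links it is worth polling the 64-bit counters (`counter_bits=64`) or shortening the interval.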

You should prioritize metrics based on their actual impact to business operations. Critical systems supporting revenue generation, customer service, or time-sensitive operations demand more aggressive monitoring of latency and throughput. Less-sensitive workloads can be observed with broader thresholds.

» Here are some common SNMP security vulnerabilities to watch out for

2. Analytics and correlation

This component applies intelligence to raw metrics, using AI and machine learning to detect anomalies, predict congestion before it occurs, and correlate events across multiple network layers.

A spike in latency might coincide with increased bandwidth utilization on a specific link, unusual traffic patterns from a particular subnet, and CPU overload on an edge router. Analytics engines identify these relationships automatically rather than requiring manual investigation.
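Commercial analytics engines are far more sophisticated, but the core idea of baseline-driven anomaly detection can be sketched with a plain statistical check. This example is an assumption-laden stand-in, not any vendor’s algorithm: it flags a sample when it sits more than a few standard deviations from the trailing window, and the window size, threshold, and `min_sigma` floor are arbitrary illustrative values.

```python
from statistics import mean, stdev


def find_anomalies(samples, window=12, z_threshold=3.0, min_sigma=0.5):
    """Return indices whose value deviates sharply from the trailing baseline."""
    anomalies = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        mu = mean(baseline)
        # Floor the spread so a perfectly flat baseline does not divide by zero.
        sigma = max(stdev(baseline), min_sigma)
        if abs(samples[i] - mu) / sigma > z_threshold:
            anomalies.append(i)
    return anomalies


latency_ms = [20, 21, 19, 20, 22, 20, 21, 19, 20, 21, 20, 22, 21, 48, 20]
print(find_anomalies(latency_ms))  # [13]
```

Because the baseline moves with the data, the same code adapts to a link that normally runs at 20ms and one that normally runs at 80ms without hand-set thresholds.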

» Make sure you know the differences between simple automation and true autonomy

3. Configuration management

This tracks device settings and network topology to prevent misconfigurations that cause outages. The goal is to maintain a complete inventory of how your network is configured, including routing protocols, VLAN assignments, firewall rules, QoS policies, and access control lists.

When you identify performance problems, effective configuration management allows you to quickly compare current settings against known-good baselines.
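Comparing a running configuration against a baseline is essentially a text diff. Here is a minimal sketch using Python’s standard `difflib`; the configuration snippets are made-up examples in a generic router syntax, and the function name is hypothetical.

```python
import difflib


def config_drift(baseline, current):
    """Return only the added (+) and removed (-) lines between two configs."""
    diff = list(difflib.unified_diff(
        baseline.splitlines(), current.splitlines(),
        fromfile="baseline", tofile="current", lineterm="", n=0))
    # Skip the two file-header lines, then keep additions and removals only.
    return [line for line in diff[2:] if line.startswith(("+", "-"))]


baseline_cfg = "interface Gi0/1\n service-policy output VOICE-QOS\n mtu 1500"
current_cfg = "interface Gi0/1\n mtu 1500"
print(config_drift(baseline_cfg, current_cfg))
# ['- service-policy output VOICE-QOS']
```

In this made-up case the diff shows a QoS policy was dropped from the interface, which is exactly the kind of change that produces choppy calls with every device still reporting “up.”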

4. Capacity planning

Monitoring trends over weeks and months makes it easier to forecast future bandwidth, computation, and storage requirements. Instead of reacting to capacity exhaustion, IT teams can identify gradual growth patterns and provision resources before constraints impact users.

Organizations implementing systematic capacity planning typically reduce over-provisioning significantly, cutting infrastructure costs while maintaining performance headroom for business growth.
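One simple way to turn trend data into a forecast is a straight-line fit over recent peaks. The sketch below assumes weekly peak utilization percentages and an 80% planning threshold, both illustrative choices; real traffic is rarely perfectly linear, so treat the result as a rough planning signal.

```python
def weeks_until_threshold(weekly_peak_pct, threshold_pct=80.0):
    """Fit a least-squares line and estimate weeks until it crosses the threshold."""
    n = len(weekly_peak_pct)
    if n < 2:
        return None
    xs = range(n)
    x_mean = sum(xs) / n
    y_mean = sum(weekly_peak_pct) / n
    slope = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, weekly_peak_pct))
             / sum((x - x_mean) ** 2 for x in xs))
    if slope <= 0:
        return None  # flat or shrinking usage: no crossing to forecast
    intercept = y_mean - slope * x_mean
    crossing_week = (threshold_pct - intercept) / slope
    return max(0.0, crossing_week - (n - 1))  # measured from the latest sample


print(weeks_until_threshold([50, 52, 54, 56, 58, 60]))  # 10.0
```

A link growing two points a week from 60% has about ten weeks before it reaches 80%, which is enough lead time to order a circuit upgrade rather than react to complaints.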

5. Alerting and reporting

A great NPM tool should provide you with real-time dashboards, automated notifications, and SLA compliance documentation. It should be able to distinguish between noise and actionable events, routing critical IT issues to the right people while filtering out transient fluctuations that self-resolve.

Reporting capabilities track performance against defined service levels, documenting historical trends and supporting business decisions about network investments.
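One common way to filter out transient fluctuations is to alert only when a threshold is breached for several consecutive samples. This is a minimal sketch of that idea with an assumed 5% packet-loss threshold and a three-sample persistence rule; the names and values are illustrative.

```python
def actionable_alerts(samples, threshold, min_consecutive=3):
    """Return the indices at which a breach has persisted long enough to alert."""
    alerts, streak = [], 0
    for i, value in enumerate(samples):
        streak = streak + 1 if value > threshold else 0
        if streak == min_consecutive:  # fire once per sustained breach
            alerts.append(i)
    return alerts


packet_loss_pct = [0.1, 6.0, 0.2, 0.1, 5.5, 6.1, 7.0, 6.4, 0.3]
print(actionable_alerts(packet_loss_pct, threshold=5.0))  # [6]
```

The single spike at index 1 self-resolves and is ignored, while the sustained loss starting at index 4 raises exactly one alert instead of four.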

6. Root cause analysis

This means identifying the underlying source of performance issues instead of just describing symptoms. When users report slow application performance, root cause analysis might reveal that the actual problem stems from a saturated internet connection, an overloaded database server, excessive latency to a cloud service, or packet loss on a WAN link.

This diagnostic capability dramatically reduces time spent troubleshooting and prevents teams from implementing ineffective fixes that address symptoms while ignoring causes.
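A first pass at narrowing down candidates can be as simple as ranking which metrics move together with the symptom. The sketch below uses Pearson correlation over made-up sample data with hypothetical metric names; correlation is not proof of cause, so the output is a shortlist for investigation rather than a verdict.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0


def rank_suspects(symptom, candidates):
    """Sort candidate metrics by how strongly they track the symptom."""
    scores = {name: pearson(symptom, series) for name, series in candidates.items()}
    return sorted(scores.items(), key=lambda kv: abs(kv[1]), reverse=True)


app_response_ms = [200, 210, 400, 650, 220]
suspects = rank_suspects(app_response_ms, {
    "db_cpu_pct": [40, 42, 41, 39, 43],
    "internet_util_pct": [60, 58, 61, 59, 60],
    "wan_packet_loss_pct": [0.1, 0.2, 2.0, 4.5, 0.1],
})
print(suspects[0][0])  # wan_packet_loss_pct
```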

How to implement NPM in your organization

Implementing NPM effectively requires strategic planning, careful tool selection, and ongoing refinement. Organizations that treat NPM deployment as a tactical project rather than a strategic initiative often struggle with incomplete visibility, alert fatigue, and limited ROI.

First lay the foundation

You can’t implement network performance management effectively unless your organization is prepared for it. Because it involves such a fundamental shift in how you manage your network, it’s not something you simply turn on and leave alone.

Here’s what you should consider first:

- Stakeholder alignment: You need to bring together network engineers, application owners, business leaders, and security teams to define shared objectives. Each group measures success differently. Engineers focus on infrastructure health, business leaders want SLA compliance, and application owners care about response times. Aligning these perspectives early prevents later conflicts about priorities and resource allocation.

- Process definition: This documents who responds to which alert types, how incidents escalate, and what remediation authority different teams possess. Everyone should know the process and understand their roles and responsibilities. Clear alert severity definitions, escalation paths, and remediation workflows prevent confusion when automated alerting begins.

- Staff training: Teams need to be able to interpret dashboards, analyze alerts, and integrate NPM insights into existing troubleshooting workflows. The goal is to ensure everyone has the right knowledge or IT certifications to work within the NPM-enhanced operational model.

Choose the right tool and NPM deployment model

Organizations can deploy NPM through three main approaches:

- On-premises solutions provide greater control and satisfy data sovereignty requirements but require dedicated infrastructure and specialized expertise. These work well for organizations with substantial data center investments and in-house networking teams.

- Cloud-based SaaS platforms deploy rapidly with minimal maintenance, scaling more easily across distributed locations. They suit hybrid architectures and organizations prioritizing agility, though they involve ongoing subscription costs.

- Hybrid architectures combine on-premises collection with cloud analytics, offering control over sensitive data while leveraging cloud scalability for processing and long-term storage.

Beyond deployment model, evaluate NPM tools on automation depth (from basic alerting to automated remediation), analytics sophistication (threshold-based versus baseline or anomaly-style analytics where supported), integration breadth (ticketing only versus full ITSM orchestration), and visibility scope (on-premises only versus cloud, SD-WAN, and mobile endpoints).

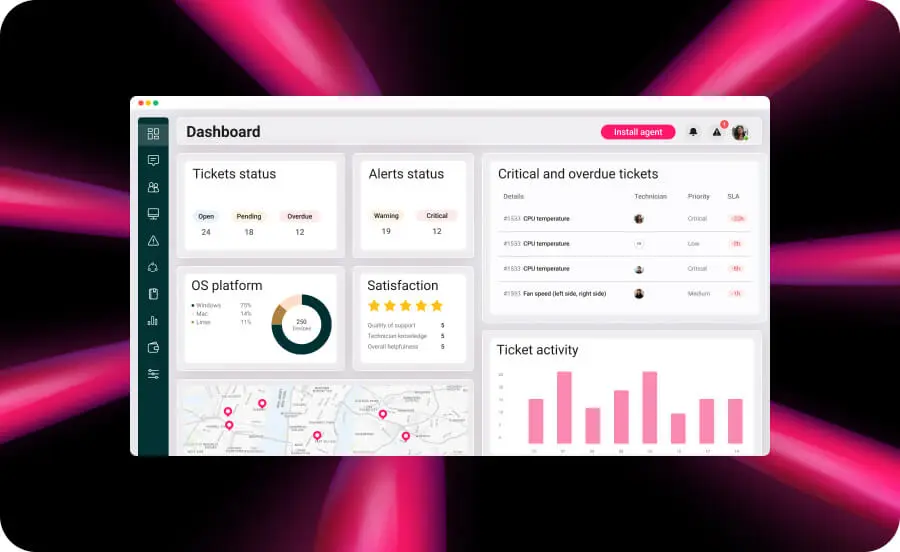

Atera provides NPM capabilities as part of a unified IT operations platform rather than a standalone point solution. Its cloud-based RMM tool supports monitoring for SNMP-capable network devices and alerting, alongside endpoint and server monitoring, and it offers Network Discovery tools as an add-on that uses Nmap-based scanning to detect devices and surface security context such as open ports and associated CVE exposure signals.

The platform’s integrated architecture connects network performance visibility directly to ticketing, remote management, and remediation within the same system. Network Discovery operates on scheduled scans (daily, weekly, or monthly) to identify new devices, unauthorized connections, and security vulnerabilities. Discovery findings can be routed into operational workflows to create and triage tickets, assign owners with full device context, and trigger appropriate responses. This eliminates data silos between network monitoring and other IT systems while reducing response time and context loss during escalation.

» Don’t miss our guide to using Nmap to find network blind spots

Maintain NPM effectiveness over time

Networks don’t stand still. New applications get deployed, user populations grow, cloud migrations shift traffic patterns, and business priorities evolve. What counted as normal performance in January can look dramatically different by June after onboarding a new division or migrating your ERP system to the cloud.

Without continuous refinement, NPM systems gradually lose relevance as the gap widens between what you’re monitoring and what actually matters to your organization. Effective long-term NPM strategies include:

- Quarterly reviews: Examine metric trends, alert accuracy, and business alignment to prevent NPM drift where monitoring becomes less relevant as infrastructure changes.

- Dynamic threshold adjustment: Ensure alerts remain meaningful as networks scale and traffic patterns shift. Customized alerts in Atera’s threshold profiles let IT teams tune alerting parameters for monitored infrastructure, adapting monitoring sensitivity to match evolving network conditions.

- Capacity planning cycles: Leverage trending data to forecast infrastructure requirements 6-12 months ahead, preventing both over-provisioning and under-provisioning.

- Post-incident analysis: Treat every significant performance issue as a learning opportunity, documenting findings in knowledge base articles.
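For the threshold adjustment step, one approach is to recompute alert thresholds from recent data rather than keeping a fixed number. The sketch below derives a threshold from the 95th percentile of recent samples plus 25% headroom; the percentile, headroom, and function names are all illustrative assumptions rather than settings from any particular product.

```python
def percentile(values, pct):
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = max(1, -(-(pct * len(ordered)) // 100))  # integer ceiling division
    return ordered[rank - 1]


def recalibrated_threshold(recent_values, pct=95, headroom=1.25):
    """Alert threshold set a fixed margin above recent typical peaks."""
    return percentile(recent_values, pct) * headroom


january_latency_ms = list(range(20, 40))  # typical range before a migration
june_latency_ms = list(range(35, 55))     # typical range after it
print(recalibrated_threshold(january_latency_ms))  # 47.5
print(recalibrated_threshold(june_latency_ms))     # 66.25
```

A threshold left at January’s 47.5ms would fire constantly in June, which is how alert fatigue sets in when reviews are skipped.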

Traditional NPM often requires ongoing manual work (keeping inventories current, tuning alerts, and translating findings into tickets and runbooks). Autonomous IT, when implemented safely, reduces this toil by automating data collection and routing, while keeping high-risk changes behind approvals and audit trails.

With Atera’s Network Discovery, technicians can schedule Nmap-based scans to identify devices on managed subnets, alert on newly detected devices, and review open ports with associated CVE exposure context from scan results. This helps reduce blind spots caused by stale inventories, especially in environments where devices appear and disappear or where coverage relies on manual documentation.
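Conceptually, spotting new devices comes down to comparing each scan against the inventory you already trust. The sketch below is a generic illustration with made-up MAC addresses and a hypothetical function name; it is not a description of how Atera implements the feature.

```python
def diff_inventory(known, scanned):
    """Compare a trusted inventory with the latest scan, keyed by MAC address."""
    known_macs, scanned_macs = set(known), set(scanned)
    return {
        "new": sorted(scanned_macs - known_macs),      # candidates for an alert
        "missing": sorted(known_macs - scanned_macs),  # offline or decommissioned
    }


inventory = {"aa:bb:cc:00:00:01": "core-switch", "aa:bb:cc:00:00:02": "office-ap"}
latest_scan = {"aa:bb:cc:00:00:01": "core-switch", "aa:bb:cc:00:00:99": "unknown"}
print(diff_inventory(inventory, latest_scan))
# {'new': ['aa:bb:cc:00:00:99'], 'missing': ['aa:bb:cc:00:00:02']}
```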

This autonomous network discovery capability helps close the monitoring gaps that plague traditional NPM implementations. New infrastructure gains visibility at the next scheduled scan rather than remaining invisible until someone remembers to add it manually. As networks evolve, technicians can adjust threshold profiles and alerting parameters based on observed traffic patterns and operational experience. AI Copilot can draft knowledge base articles from resolved tickets, streamlining documentation workflows, though these should be reviewed before publishing to ensure accuracy and avoid exposing sensitive data.

The result is NPM that stays relevant as your network evolves, reducing the manual overhead of maintaining monitoring systems while improving accuracy and coverage. IT teams shift from constantly updating configurations to defining strategic boundaries and operational goals.

» Not convinced? Here’s how Autonomous IT operations eliminate up to 90% of outages

Move from reactive monitoring to proactive performance management

Network performance management transforms IT operations from reactive firefighting into proactive optimization. By moving beyond basic device monitoring to comprehensive performance analysis, organizations gain visibility into latency, throughput, packet loss, and user experience across every segment of their infrastructure. As networks evolve with hybrid cloud architectures, remote workforces, and mobile endpoints, NPM strategies must adapt through distributed monitoring, context-aware alerting, and autonomous systems that learn and adjust independently rather than requiring constant manual reconfiguration.

Atera provides comprehensive IT management that extends beyond network monitoring to encompass the entire operational workflow in a single platform that combines RMM, ticketing, PSA, and network performance visibility. This enables teams to connect monitoring context directly to ticket handling and technician actions.

Defensible AI-powered capabilities provide assistive automation with appropriate controls, including role-based access, approvals for high-risk actions, and decision logging.

» Interested? Try Atera for free
