
Multimodal AI, the next evolution of generative AI, is able to make use of data from many sources and in many formats. That ability serves enterprises well, as so much of a business’s data today exists in many formats, types, and locations. See how multimodal AI is in use already and what’s possible.

Key Takeaways

- Multimodal AI can take in, process, and combine multiple types and formats of data to produce more comprehensive responses and content

- Multimodal AI depends on a combination of advances in LLMs, transformer models and encoder/decoder frameworks to handle complex tasks

- Enterprises can use multimodal AI for a wide range of tasks, since it matches the broad range of data types that businesses are ingesting, storing, and using

- Applications in medicine, research, IT, finance, and self-driving cars continue to grow as multimodal AI models mature, though widespread adoption is still some way off

What is multimodal AI?

Multimodal AI can combine and analyze different forms of data, such as text and voice, to gain a broader understanding of a topic. This is particularly useful for modern enterprises, as the rise of AI in enterprises is increasingly driven by the use of unstructured data like videos, photos, documents, and social media posts. Using multimodal AI can give users more accurate insights, identify correlations across domains and data types, and provide more context for advanced applications in fields like healthcare, security, and IT, as well as in virtual assistants.

Multimodal AI differs from typical AI models in that it can handle multiple types of data at once, in varying formats including text, images, audio, video, and other input. Traditional AI models handle only a single type of data.

AI in general is a quickly evolving field, and recent advances in algorithm training are being applied to multimodal research. A Gartner prediction notes that 40% of generative AI solutions will be multimodal by 2027.

How multimodal AI works

Unimodal generative AI systems can generally process one type of input and return output in that same type of data; OpenAI’s GPT-3, which works with text only, is one example. With a multimodal AI system, it’s possible to input multiple types or modalities of data, such as both text and images. A multimodal AI system can then produce both text and images in response. This can alleviate some of the limitations of unimodal AI, such as its limited scope and sometimes weak interpretation of context, so multimodal AI delivers more contextually aware output. Consider, for example, a video, an image, and a text description of the same event: each captures that event differently and at a different level of quality.

The technology behind multimodal AI

Unimodal AI is built with a variety of algorithms and models, but multimodal AI takes those capabilities a step further. Multimodal AI consists of multiple unimodal neural networks, which together make up an input module that can accept multiple data types. There’s also a fusion module to combine, align, and process data from each modality, then an output module to deliver results.
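The three-module layout described above can be sketched in a few lines of Python. This is a toy illustration, not a real model: the encoders, the concatenation-based fusion, the linear output step, and every name in the block (`encode_text`, `encode_image`, `fuse`, `output_module`, `run_pipeline`) are assumptions made for the example.

```python
from typing import Any, Callable, Dict, List

Vector = List[float]


def encode_text(text: str) -> Vector:
    """Toy text encoder (hypothetical): [word count, mean word length]."""
    words = text.split()
    if not words:
        return [0.0, 0.0]
    return [float(len(words)), sum(len(w) for w in words) / len(words)]


def encode_image(pixels: List[List[float]]) -> Vector:
    """Toy image encoder (hypothetical): [mean brightness, max brightness]."""
    flat = [p for row in pixels for p in row]
    if not flat:
        return [0.0, 0.0]
    return [sum(flat) / len(flat), max(flat)]


# Input module: one unimodal encoder per modality.
ENCODERS: Dict[str, Callable[[Any], Vector]] = {
    "text": encode_text,
    "image": encode_image,
}


def fuse(features: Dict[str, Vector]) -> Vector:
    """Fusion module: concatenate features in a fixed (sorted) modality order."""
    fused: Vector = []
    for name in sorted(features):
        fused.extend(features[name])
    return fused


def output_module(fused: Vector, weights: Vector, bias: float = 0.0) -> float:
    """Output module: a simple linear score over the fused vector."""
    if len(fused) != len(weights):
        raise ValueError("weights must match the fused vector length")
    return sum(w * x for w, x in zip(weights, fused)) + bias


def run_pipeline(inputs: Dict[str, Any], weights: Vector) -> float:
    """Encode each modality, fuse the features, then produce one output."""
    features = {name: ENCODERS[name](value) for name, value in inputs.items()}
    return output_module(fuse(features), weights)


if __name__ == "__main__":
    sample = {"text": "a red car", "image": [[0.0, 1.0], [0.5, 0.5]]}
    print(run_pipeline(sample, [1.0, 1.0, 1.0, 0.0]))
```

In a production system each encoder would be a trained neural network and the fusion step would be learned rather than a plain concatenation; the sketch only shows how the three modules hand data to one another.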

Multimodal AI can identify patterns between different types of data inputs. To do so, multimodal AI models take advantage of large language models (LLMs), which are built on deep neural networks, along with transformer models and encoder-decoder frameworks. That encoder-decoder architecture uses an attention mechanism to process data from each modality, with a separate encoder for each: a computer vision encoder for images, a natural language processing (NLP) encoder for text, and so on. Then, multimodal AI uses data fusion techniques to integrate the different modalities. Fusion techniques can be applied at different places within the multimodal AI model, depending on the model creator’s design goals.
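To make the point about where fusion happens more concrete, here is a minimal sketch contrasting two commonly described placements: early fusion (combine features, then score once) and late fusion (score each modality, then combine the scores). The function names, the linear scoring, and the `text_share` parameter are assumptions for illustration only.

```python
from typing import Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    """Dot product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))


def early_fusion_score(
    text_vec: Sequence[float],
    image_vec: Sequence[float],
    joint_weights: Sequence[float],
) -> float:
    """Early fusion: concatenate modality features, then apply one joint scorer."""
    joint = list(text_vec) + list(image_vec)
    return dot(joint, joint_weights)


def late_fusion_score(
    text_vec: Sequence[float],
    image_vec: Sequence[float],
    text_weights: Sequence[float],
    image_weights: Sequence[float],
    text_share: float = 0.5,
) -> float:
    """Late fusion: score each modality on its own, then blend the two scores."""
    text_score = dot(text_vec, text_weights)
    image_score = dot(image_vec, image_weights)
    return text_share * text_score + (1.0 - text_share) * image_score


if __name__ == "__main__":
    text_features = [1.0, 2.0]
    image_features = [3.0]
    print(early_fusion_score(text_features, image_features, [1.0, 1.0, 1.0]))
    print(late_fusion_score(text_features, image_features, [1.0, 1.0], [2.0]))
```

Early fusion lets the scorer see interactions between modalities, while late fusion keeps each modality's model independent, which can be easier to maintain when one input is missing or noisy.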

Transformer models are essential for multimodal AI, as they process sequential data efficiently. Their self-attention mechanisms mean multimodal models can understand long-range dependencies and adapt to different inputs. Finally, embedding models are used in multimodal AI to transform complex data into numerical vectors, called embeddings, that allow AI to understand relationships between data and thus classify and search that data. Along with vector databases, those embedding models are what allow multimodal AI models to store and search data from different modalities in one place.
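As a rough illustration of how embeddings support search across modalities, the sketch below ranks items by cosine similarity to a query vector. The three-dimensional vectors, the item labels, and the in-memory `INDEX` dictionary are made up for the example; a real system would get its vectors from a trained embedding model and would typically store them in a vector database.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(
    query: Sequence[float],
    index: Dict[str, Sequence[float]],
    top_k: int = 2,
) -> List[str]:
    """Return the ids of the top_k items most similar to the query vector."""
    scored = [(cosine_similarity(query, vec), item_id) for item_id, vec in index.items()]
    scored.sort(reverse=True)
    return [item_id for _, item_id in scored[:top_k]]


# Hypothetical embeddings: items from different modalities in one shared space.
INDEX: Dict[str, Sequence[float]] = {
    "caption:dog on beach": [0.9, 0.1, 0.0],
    "photo:dog.jpg": [0.8, 0.2, 0.1],
    "audio:engine.wav": [0.0, 0.1, 0.9],
}

if __name__ == "__main__":
    print(search([1.0, 0.0, 0.0], INDEX))
```

Because the caption and the photo sit close together in the shared space, a single query retrieves both, regardless of their original format.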

The result: multimodal AI systems can interpret more diverse information and learn from it to make accurate, human-like predictions. Outputs are more complex, matching the use cases and needs of human workers.

Real-world applications and use cases for multimodal AI

Multimodal AI brings a lot of possibilities for enterprises, though the technology is still in its early days. Some of the ways that users are already exploring multimodal AI include:

- Delivering personalized makeup and skincare recommendations with computer vision, as Sephora does, to increase sales and customer satisfaction

- Automating IT workflows to speed up resolution of user issues, with the ability to communicate through both text and voice

- Improving fraud detection in banking and finance with multimodal AI’s pattern recognition abilities

- Combining sensor data from cameras, radar, and lidar to improve self-driving car performance

- Automating insurance claims processing, pulling in photos and documents from multiple sources to reduce errors and speed up resolution

- Creating automated workflows for complex, multi-step tasks, such as in medical and scientific research or lengthy legal or financial processes, as OpenAI is working on with its o-series models

- Generating and interpreting images, such as with OpenAI’s DALL-E 3, which creates images based on text prompts, or GPT-4V, which can process both images and text

Benefits of multimodal AI

Multimodal AI can work more like humans do, with insights, knowledge, and work tasks that reflect the variety of inputs in daily life. It’s more versatile than unimodal AI, with these benefits:

Accuracy

Multimodal AI brings in multiple data streams that make up a full picture of a topic or event, thus leading to better results that reflect that full picture.

Problem solving

Multimodal AI can also solve problems more effectively when it has all the potential data inputs, such as when diagnosing medical conditions.

Learning across domains

With access to multiple modalities, multimodal AI can also learn more deeply from those various types of data to be able to perform more tasks.

Recognizing patterns

With multiple data inputs, multimodal AI has more context for a question, problem, or workflow, so it’s able to recognize patterns across data and provide accurate, relevant outputs.

Improved automation

With more data and context available, multimodal AI can enhance tools like chatbots, virtual assistants, and AR for better user experiences.

Trends and what’s next for multimodal AI

Multimodal AI, like the entire field of artificial intelligence, is evolving fast. Multimodal AI can bring a lot of benefits, but as the Gartner prediction cited earlier suggests, multimodal solutions will likely account for only about 40% of generative AI solutions by 2027. That’s in part because of onerous data requirements and other challenges, along with the continuing need to ensure that AI is unbiased, accurate, and respectful of data privacy.

Here’s what to look out for next:

Unified multi- and unimodal AI models

Some of the popular generative AI models out there today, like OpenAI’s GPT and Google’s Gemini, are already able to handle text, images, and other data types inside a single architecture, so these will likely keep maturing.

Real-time multimodal AI processing

In addition to multimodal considerations, enterprises are also working more and more in real time. Augmented reality, data processing for self-driving cars, financial use cases, and other decision-based functions all require AI to process and use data from multiple sources in real time.

Multimodal data augmentation

As synthetic data becomes more widely used, such as for training datasets and improving model performance, multimodal AI can come into play to combine synthetic and real data from multiple formats and sources.

Cross-modal interaction

As researchers continue developing the key functionalities behind multimodal AI, like the attention mechanisms and transformers, the technology is more able to align and fuse data from various inputs. That results in clearer, contextually accurate outputs.
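One way attention can align inputs across modalities is cross-attention, where vectors from one modality (say, text tokens) act as queries over vectors from another (say, image regions). The sketch below is a minimal scaled dot-product version in plain Python; the function names and the tiny hand-written vectors are illustrative assumptions, and real models learn projection matrices for the queries, keys, and values.

```python
import math
from typing import List, Sequence


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(
    queries: Sequence[Sequence[float]],
    keys: Sequence[Sequence[float]],
    values: Sequence[Sequence[float]],
) -> List[List[float]]:
    """Scaled dot-product attention: each query mixes the values by key similarity."""
    scale = math.sqrt(len(keys[0]))
    outputs: List[List[float]] = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs


if __name__ == "__main__":
    text_queries = [[10.0, 0.0]]              # one text token (hypothetical)
    region_keys = [[1.0, 0.0], [0.0, 1.0]]    # two image regions (hypothetical)
    region_values = [[2.0], [4.0]]
    print(cross_attention(text_queries, region_keys, region_values))
```

The query that points in the same direction as the first region's key draws almost all of its output from that region's value, which is the alignment behavior the paragraph above describes.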

Assessing data requirements

As unimodal AI has shown already, generative artificial intelligence requires lots of data and energy to work effectively. Multimodal AI models require even more healthy, well-labeled data from a wider range of formats and types to be trained effectively and accurately.

Improving data fusion

Combining data is one of the primary roles of multimodal AI, and can bring challenges. Different kinds of data modalities aren’t always time-aligned, and data types can be so different from each other that there’s still much to do in making them work together.
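The time-alignment problem can be illustrated with a small sketch that pairs each video frame with the nearest audio sample, but only if the two timestamps fall within a tolerance. The function name `align_nearest`, the tolerance value, and the sample timestamps are assumptions for the example; real pipelines often also need resampling or interpolation.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence, Tuple


def align_nearest(
    frame_times: Sequence[float],
    audio_times: Sequence[float],
    tolerance: float,
) -> List[Tuple[float, Optional[float]]]:
    """Pair each frame time with the nearest audio time within `tolerance`.

    `audio_times` must be sorted in ascending order. Frames with no audio
    sample close enough are paired with None.
    """
    pairs: List[Tuple[float, Optional[float]]] = []
    for t in frame_times:
        i = bisect_left(audio_times, t)
        # Only the neighbors just before and just after t can be the nearest.
        candidates = audio_times[max(i - 1, 0): i + 1]
        best = min(candidates, key=lambda a: abs(a - t), default=None)
        if best is not None and abs(best - t) <= tolerance:
            pairs.append((t, best))
        else:
            pairs.append((t, None))
    return pairs


if __name__ == "__main__":
    frames = [0.0, 0.5, 1.0, 3.0]   # seconds (hypothetical)
    audio = [0.02, 0.48, 1.3]       # seconds (hypothetical, sorted)
    for frame_time, audio_time in align_nearest(frames, audio, tolerance=0.1):
        print(frame_time, audio_time)
```

Frames left unpaired show why fusion is hard in practice: the model has to decide whether to drop them, fill the gap, or proceed with one modality missing.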

Bringing multimodal AI into the future

Multimodal AI can ingest multiple data types and modalities, opening up the possibilities for more accurate, relevant, and comprehensive AI outputs. Multimodal AI continues to emerge and mature, bringing lots of potential to industries like finance, medicine, research, and IT.

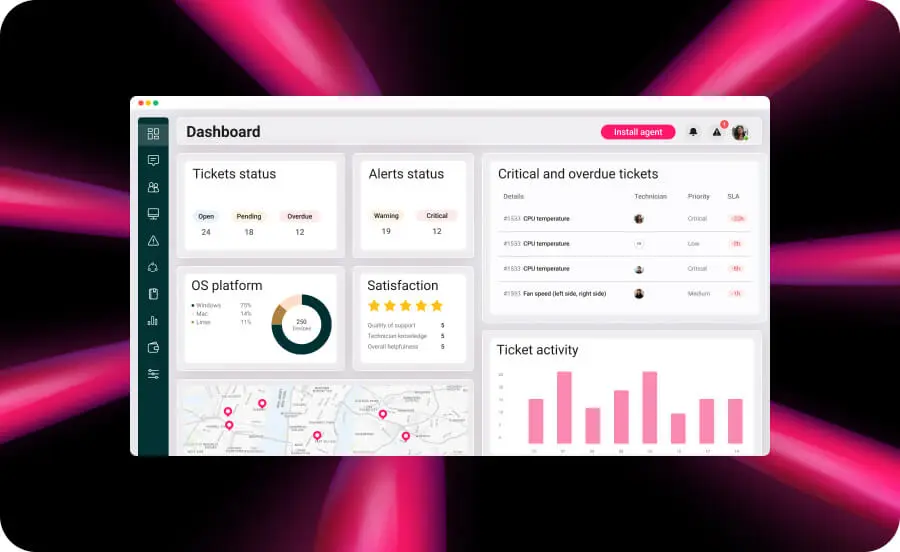

AI technology is already saving time and resources and helping end users solve their problems faster: Atera’s AI Copilot brings multimodal capabilities to users through its support of both voice and text inputs. AI Copilot, part of Atera’s product suite to automate and improve IT management, is an advanced assistant for IT technicians.

AI Copilot brings intelligent assistance for device management, ticket resolution, and alert management, all of which help busy IT technicians to work smarter and solve user issues faster. AI Copilot includes features like custom script creation, remote session summaries, command generation, natural language device search, ticket replies, knowledge base article generation, and real-time device troubleshooting.
