
Managing IT infrastructure across multiple locations means dealing with endless vulnerability alerts. Traditional scanning tools generate mountains of data but little actionable intelligence. Security teams spend their time chasing false positives, which can exceed 50% of all alerts. Meanwhile, unpatched vulnerabilities sit exposed for months.

This guide shows you how autonomous vulnerability management transforms this chaos into a manageable security system.

» Confused about vulnerability management basics? Explore our IT glossary

Traditional vulnerability management breaks at scale

Here’s the uncomfortable reality: your current vulnerability management approach worked fine when you had 200 endpoints. But at 2,000 endpoints across multiple locations? It’s drowning your team.

Let’s break down exactly why traditional approaches collapse and cause IT issues when you try to scale them.

Patch delays expose your infrastructure for months

Think about your last critical vulnerability. How long did it take from discovery to deployment? If you’re like most organizations, the answer is weeks or months, not the hours that attackers need to exploit it.

The 2024 numbers are sobering: 45% of critical vulnerabilities never get patched. Average remediation takes weeks for critical vulnerabilities, stretching to months for applications.

Why the delays? Because traditional patch management requires human review at every step. Someone has to assess the vulnerability, check for conflicts, schedule maintenance windows, test the patch, and coordinate deployment. Multiply this across thousands of endpoints and hundreds of applications, and you’ve created a bottleneck that can’t possibly keep up with the rate of new vulnerabilities.

For MSPs managing retail POS systems, these delays mean PCI compliance violations and breach liability.

Alert overload paralyzes decision making

Every morning, your team faces a dashboard filled with thousands of alerts. But here’s the problem: vulnerability scanners flag these potential issues without context. Your team can’t distinguish between a theoretical CVE in an unused library and an actively exploited vulnerability in your production payment system.

The result is that some organizations receive over 10,000 daily alerts, with more than 50% being false positives. This creates widespread alert fatigue: 56% of IT professionals admitted to having ignored an alert based on past false-positive experiences. When everything looks like a crisis, nothing gets the attention it deserves.

Root causes behind vulnerability chaos

The breakdown isn’t random; it stems from two fundamental flaws in how traditional vulnerability management was designed. Understanding these root causes is critical because they explain why simply buying more tools or hiring more people won’t solve the problem.

Manual processes can’t handle modern scale

Traditional vulnerability management was designed for a simpler world. The process works like this: scan, review, prioritize, patch, verify. When you have 50 endpoints, a security analyst can handle this workflow. When your enterprise IT environment has 5,000 endpoints across multiple clients? The math doesn’t work.

Here’s the reality: each vulnerability requires human judgment. An analyst must review the CVE details, assess the asset’s criticality, check for business impact, coordinate with stakeholders, and manage exceptions. Even at 10 minutes per vulnerability (which is optimistic), a single day’s scan results can require weeks of human review.
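To make that arithmetic concrete, here is a rough back-of-the-envelope sketch. Only the 10 minutes per vulnerability comes from the text above; the 1,000 findings per daily scan, the 8-hour workday, and the 5-day week are illustrative assumptions, so substitute your own numbers.

```python
MINUTES_PER_FINDING = 10    # from the text: an optimistic review time
FINDINGS_PER_SCAN = 1_000   # assumption: findings produced by one day's scan
HOURS_PER_WORKDAY = 8       # assumption
WORKDAYS_PER_WEEK = 5       # assumption


def review_workdays(findings: int,
                    minutes_each: int = MINUTES_PER_FINDING,
                    hours_per_day: int = HOURS_PER_WORKDAY) -> float:
    """Analyst workdays needed to manually review a batch of findings."""
    return findings * minutes_each / 60 / hours_per_day


workdays = review_workdays(FINDINGS_PER_SCAN)
weeks = workdays / WORKDAYS_PER_WEEK
print(f"{workdays:.1f} analyst workdays (~{weeks:.1f} weeks) per daily scan")
```

Under these assumptions, one analyst would need roughly four working weeks to clear a single day of scan output, and a new scan arrives every day.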

MSPs face an even bigger challenge. They must multiply this effort across every client environment, creating individual remediation plans, tracking completion across different systems, and managing client-specific exceptions and maintenance windows. A single analyst might be responsible for vulnerability management across 20+ different client environments, each with unique configurations and requirements.

Tool fragmentation creates operational blindness

The second problem is architectural. Your security stack probably includes separate tools for vulnerability scanning, patch management, asset inventory, change management, and ticketing. Each tool has its own database, its own interface, and its own workflow.

This fragmentation creates three critical problems:

1. Context disappears at every handoff. Your vulnerability scanner identifies a critical SQL injection flaw, but it doesn’t know that the affected server was decommissioned last week. Your patch management tool knows a patch is available, but it doesn’t know that installing it will break the custom application that depends on the vulnerable component. Your ticketing system tracks the remediation ticket, but it can’t trigger a fix automatically based on the vulnerability’s severity.

2. Delays compound at every integration point. Data must be exported from one system, transformed, and imported into another. Each handoff introduces delay and the possibility of error. A critical vulnerability discovered on Monday might not show up in your patch management queue until Wednesday, and the remediation ticket might not be created until Friday.

3. Nobody has complete visibility. Your vulnerability team knows about the CVEs. Your operations team knows about the systems. Your change management team knows about the maintenance windows. But nobody has a complete picture of risk, priority, and remediation status across the entire environment.
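The lost-context problem from the first point can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the `Finding` and `Asset` types, the status values, the hostnames, and the placeholder CVE IDs are all hypothetical. It shows what becomes possible once scanner output and asset inventory share a key, here the hostname.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str      # placeholder IDs below, not real CVEs
    hostname: str


@dataclass(frozen=True)
class Asset:
    hostname: str
    status: str      # assumed values: "active" or "decommissioned"


def triage_by_inventory(findings, inventory):
    """Split findings into actionable, stale, and unknown-host buckets."""
    assets = {asset.hostname: asset for asset in inventory}
    buckets = {"actionable": [], "stale": [], "unknown_host": []}
    for finding in findings:
        asset = assets.get(finding.hostname)
        if asset is None:
            # Host missing from inventory: a visibility gap worth investigating.
            buckets["unknown_host"].append(finding)
        elif asset.status == "active":
            buckets["actionable"].append(finding)
        else:
            # Decommissioned host: the finding no longer needs a patch.
            buckets["stale"].append(finding)
    return buckets


inventory = [Asset("db-01", "active"), Asset("web-07", "decommissioned")]
findings = [
    Finding("CVE-0000-0001", "db-01"),
    Finding("CVE-0000-0002", "web-07"),
    Finding("CVE-0000-0003", "kiosk-99"),
]
buckets = triage_by_inventory(findings, inventory)
for name, items in buckets.items():
    print(name, [f.cve_id for f in items])
```

Findings on hosts missing from the inventory are kept in their own bucket rather than discarded, since an unknown host is itself a visibility gap.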

How autonomous vulnerability management transforms security operations

Autonomous vulnerability management represents a fundamental shift from reactive security firefighting to proactive, intelligent protection. Unlike traditional automated management that requires human-triggered scans, autonomous vulnerability management operates continuously, like having your smartest security analyst working 24/7 but without the coffee breaks or burnout.

Here’s what separates true autonomy from simple automation: autonomous systems don’t just follow scripts written by humans. They use machine learning to discover complex patterns in data, then apply those insights to make decisions and take action independently. Your role shifts from writing detailed rules to setting strategic goals and operational boundaries that help the autonomous system get better over time.

The AVM engine: Detection through resolution

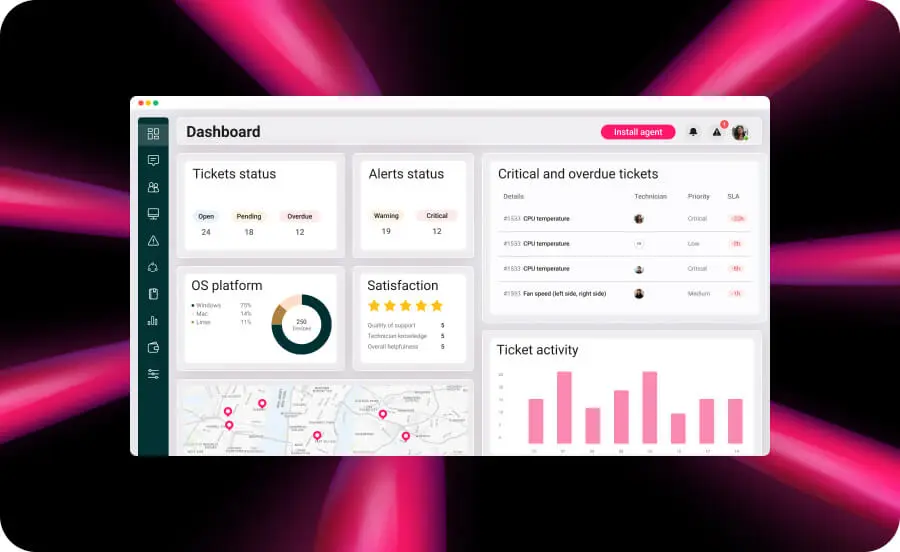

Autonomous discovery goes beyond asset scanning. Traditional tools follow predetermined rules to find devices. Atera’s RMM platform features integrated network discovery that learns what normal network behavior looks like across your environment, then identifies anomalies that might represent unauthorized devices or common vulnerabilities and lets you know with configurable alerts.

Intelligent threat detection learns from patterns. Understanding zero-day exploit mechanisms helps you appreciate how autonomous vulnerability management (AVM) enhances traditional vulnerability management approaches. While signature-based tools match known CVE patterns, machine learning engines discover correlations between system behaviors, threat indicators, and exploitation patterns that no human-written rule could anticipate.

Context-aware prioritization adapts to your business. This is where autonomous systems show their intelligence. Atera’s RMM platform with asset and inventory scanning provides visibility into critical assets across your environment and simplifies patch management across your IT infrastructure, while AI Copilot assists technicians with generating custom scripts for managing security-related tasks.
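To illustrate what context-aware prioritization means in practice, the sketch below ranks two findings with a hand-weighted heuristic. It stands in for a learned model and does not represent how Atera or any other product scores risk. The fields, the weights (2.0 and 0.25), the 1-to-5 criticality scale, and the placeholder CVE IDs are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str               # placeholder IDs below, not real CVEs
    cvss: float               # base severity score, 0.0 to 10.0
    actively_exploited: bool  # e.g. reported as exploited in the wild
    component_in_use: bool    # is the vulnerable component actually loaded?
    asset_criticality: int    # assumed scale: 1 (lab box) to 5 (payment system)


def risk_score(finding: Finding) -> float:
    """Scale raw severity by business context (illustrative weights)."""
    score = finding.cvss * (finding.asset_criticality / 5)
    if finding.actively_exploited:
        score *= 2.0    # assumed boost for active exploitation
    if not finding.component_in_use:
        score *= 0.25   # assumed discount for unused components
    return round(score, 2)


findings = [
    Finding("CVE-0000-0001", cvss=9.8, actively_exploited=False,
            component_in_use=False, asset_criticality=2),
    Finding("CVE-0000-0002", cvss=7.5, actively_exploited=True,
            component_in_use=True, asset_criticality=5),
]
ranked = sorted(findings, key=risk_score, reverse=True)
for finding in ranked:
    print(finding.cve_id, risk_score(finding))
```

With these weights, the lower-severity but actively exploited flaw on the payment system outranks the higher-severity CVE in an unused library, which is the distinction a raw severity list cannot make.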

» Learn more about how AI is leading the digital IT transformation

How to implement AVM in your environment

Before deploying AVM, complete three critical assessments: inventory all assets for complete coverage, verify integration capabilities with existing tools, and establish clear classification frameworks for automated decision-making.

- Complete your asset inventory. AVM protects only what it sees. Proper IT asset management and lifecycle management form the foundation, while network discovery capabilities help identify all assets automatically.

- Verify security stack integration. Confirm API availability for SIEM, SOAR, endpoint protection, and patch management platforms. Organizations using Atera benefit from unified RMM, PSA, and ticketing functions.

- Establish vulnerability classification frameworks. Define clear policies for classification, escalation, and remediation. Document these policies before automation begins. Following IT change management processes and patch management policies ensures smooth integration.
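One way to document classification policies before automation begins is to write them down as data that both people and tooling can read. The sketch below is a hypothetical example: the severity tiers, deadlines, escalation contacts, and the rule that critical assets always need human approval are assumptions to be replaced with your own policy.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class Policy:
    remediate_within: timedelta
    escalate_to: str


# Assumed severity tiers, deadlines, and escalation contacts.
POLICIES = {
    "critical": Policy(timedelta(days=1), "security-lead"),
    "high": Policy(timedelta(days=7), "it-manager"),
    "medium": Policy(timedelta(days=30), "service-desk"),
    "low": Policy(timedelta(days=90), "service-desk"),
}


def decide(severity: str, asset_is_critical: bool) -> dict:
    """Map a classified finding to an action under the documented policy."""
    policy = POLICIES.get(severity.lower())
    if policy is None:
        # Anything the policy does not cover goes to a person, not a script.
        return {"action": "needs_human_review",
                "reason": f"unclassified severity: {severity}"}
    # Assumed rule: critical assets always require human approval.
    action = "needs_approval" if asset_is_critical else "auto_remediate"
    return {"action": action,
            "deadline_days": policy.remediate_within.days,
            "escalate_to": policy.escalate_to}


print(decide("high", asset_is_critical=False))
print(decide("critical", asset_is_critical=True))
print(decide("informational", asset_is_critical=False))
```

Defaulting unknown classifications to human review keeps the automation from acting on cases the policy never anticipated.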

» Make sure you know the difference between RMM and PSA

Implementation roadmap

A realistic implementation follows five distinct stages, each building on the previous one to ensure stable, sustainable deployment. This staged approach allows for proper testing, team training, and gradual automation that builds confidence rather than creating chaos.

- Stage 1: Planning and assessment. Define success metrics like MTTR reduction, patch coverage percentage, and false positive rates, then establish policies and identify pilot environments.

- Stage 2: Tool configuration and integration. Deploy discovery agents, configure AI training, and integrate security tools.

- Stage 3: Process development and testing. Develop workflows, test in non-production, refine algorithms, and train staff.

- Stage 4: Pilot deployment. Launch with human approval, monitor AI decisions, gather feedback, and increase automation scope gradually.

- Stage 5: Production rollout. Expand to production with graduated automation, maintain oversight for critical systems, and measure against KPIs.
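The success metrics named in Stage 1 are simple to compute once the raw data is collected. The sketch below shows one plausible set of definitions, with mean time to remediate (MTTR) measured in days from detection to fix. The function names and all sample figures are made up for illustration and are not measurements.

```python
from datetime import datetime
from statistics import mean


def mttr_days(remediations) -> float:
    """Mean time to remediate, in days, from (detected, fixed) pairs."""
    if not remediations:
        raise ValueError("no remediations recorded")
    return mean((fixed - detected).total_seconds() / 86_400
                for detected, fixed in remediations)


def percentage(part: int, whole: int) -> float:
    """Share of `whole` represented by `part`, as a percentage."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100 * part / whole


# Illustrative sample data only.
remediations = [
    (datetime(2025, 1, 1), datetime(2025, 1, 4)),   # fixed in 3 days
    (datetime(2025, 1, 2), datetime(2025, 1, 9)),   # fixed in 7 days
]
print(f"MTTR: {mttr_days(remediations):.1f} days")
print(f"Patch coverage: {percentage(1_800, 2_000):.1f}%")
print(f"False positive rate: {percentage(5_200, 10_000):.1f}%")
```

Capturing these numbers before the pilot gives you a baseline, so the effect of each automation stage can be measured rather than assumed.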

Making the move to autonomous vulnerability management

Autonomous Vulnerability Management isn’t coming. It’s here. While competitors struggle with spreadsheets and false positives, teams using Atera’s Autonomous IT platform are already slashing remediation times.

The transformation gives you proactive security management instead of perpetual firefighting. Atera’s Robin eliminates up to 40% of your IT workload while the RMM platform provides autonomous monitoring and discovery capabilities.

IT Operations Managers drowning in vulnerability backlogs and MSPs struggling to scale security services now have a clear path forward.

» Ready to stop playing catch-up with vulnerabilities? Start your free trial and see Autonomous IT in action
