
You know that sinking feeling when a user calls to report a server down, and you had no idea anything was wrong? The worst part isn’t the outage itself, but realizing your infrastructure was failing in slow motion for hours or days, and you just couldn’t see it happening. According to the Uptime Institute, a considerable number of outages are caused by human error and management failures. That means many of the fires we’re fighting could have been prevented if we’d just seen them coming.

Modern IT infrastructure has become impossible to manage by feel. Between on-premises servers, multi-cloud workloads, containers spinning up and down, and legacy systems that refuse to die, we’re all flying blind unless we have proper monitoring in place.

Here’s everything you need to know about what proper infrastructure monitoring looks like.

» Learn more about the costs of legacy IT

The infrastructure visibility crisis

Modern IT infrastructure has become a tangled web of complexity. Organizations now juggle on-premises servers, cloud workloads across AWS and Azure, containerized applications, virtual machines, network devices, and legacy systems all operating simultaneously. Yet despite this explosive growth in infrastructure components, most IT teams still operate essentially blind.

The result is expensive, preventable chaos. Infrastructure failures aren’t rare edge cases. They’re a costly and predictable consequence of insufficient visibility, but that doesn’t have to be the case.

According to the Uptime Institute, around a third of all reported outages cost more than $250,000, yet 80% of respondents say their most recent service outage could have been prevented. Flexera’s State of the Cloud Report finds that 84% of respondents list managing cloud spend as their top cloud challenge, with up to 27% of that spend wasted each year.

Without visibility into actual utilization patterns (real CPU loads, memory consumption, storage growth trends), capacity planning becomes expensive guesswork. Teams either throw hardware at problems reactively or make purchasing decisions based on outdated assumptions rather than data-driven forecasts.

“In my experience managing a hybrid datacenter, proactive CPU and disk-I/O alerts cut unplanned downtime by nearly 50%. Additionally, during a ransomware recovery event I supported, having real-time visibility into unaffected nodes allowed us to restore critical workloads hours faster, directly preserving SLAs.”

Ruben Castellano Gonzalez

The root cause is often mundane, such as blind spots where teams simply don’t have eyes on critical systems. A server’s CPU climbs steadily toward saturation, disk I/O degrades over days, memory leaks slowly consume resources. Without continuous monitoring, these warning signs go unnoticed until something breaks catastrophically.

Security blind spots you can’t see

Perhaps most dangerously, lack of infrastructure monitoring creates invisible security vulnerabilities. Data breaches are already expensive, but without the right monitoring tools in place, you could suffer an extra $2.2 million in breach costs, according to IBM.

The problem intensifies as IT and operational technology converge. In fact, experts believe that by 2025, cyber attackers will have weaponized OT environments to harm or kill humans.

Legacy Windows servers, unpatched network devices, and shadow IT infrastructure create additional gaps. When you can’t see configuration drift, unauthorized access, or unusual traffic patterns, you can’t protect against them.

» Here’s how to use Nmap to find network blind spots

Drowning in noise, missing what matters

Even when organizations do implement monitoring, they often drown in noise that obscures genuine problems. Orca Security found that more than half of respondents (around 55%) said their teams had missed critical alerts due to ineffective alert prioritization.

Tool sprawl makes this worse. Enterprise IT environments often accumulate multiple, unintegrated monitoring platforms, usually one for cloud, another for on-premises, and a third for network devices. This creates data silos that slow incident response and force technicians to check several different dashboards to understand what’s actually happening.

The result is that teams are in permanent reactive mode, firefighting visible crises while invisible problems accumulate in the background, waiting to become tomorrow’s emergency.

» Here are the differences between self-hosted vs. on-premises RMM and SaaS vs. on-premises software

What infrastructure monitoring actually does

Infrastructure monitoring is the continuous tracking of the health, performance, and availability of all components that keep an IT environment running. For example, tracking CPU utilization and thread saturation through metrics like load average, context switches, thermal throttling, and interrupt rate helps teams detect bottlenecks that directly degrade application performance.

It focuses on the foundational systems (servers, networks, hypervisors, cloud resources, etc.), ensuring they stay healthy and performant. It differs from application monitoring, which examines code-level behavior, user transactions, and errors within the software itself.
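To make the CPU example concrete, here is a minimal sketch of turning a raw load average into a coarse health status. The function names and the 0.7 and 1.0 thresholds are assumptions chosen for illustration, not values from any particular monitoring product.

```python
import os


def load_per_core(load_avg: float, cores: int) -> float:
    """Normalize a load average by core count so hosts of different sizes compare."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return load_avg / cores


def classify_cpu_pressure(load_avg: float, cores: int,
                          warn: float = 0.7, critical: float = 1.0) -> str:
    """Map normalized load to a coarse status.

    The warn/critical thresholds are illustrative assumptions; tune them
    against your own baselines.
    """
    ratio = load_per_core(load_avg, cores)
    if ratio >= critical:
        return "critical"
    if ratio >= warn:
        return "warning"
    return "ok"


if __name__ == "__main__":
    # os.getloadavg() is only available on Unix-like systems.
    _one_min, five_min, _fifteen_min = os.getloadavg()
    cores = os.cpu_count() or 1
    print(classify_cpu_pressure(five_min, cores))
```

Normalizing by core count matters because a load average of 6 is a problem on a 4-core host but unremarkable on a 32-core one.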

In real operations, infrastructure monitoring extends far beyond servers to include:

- Networks: Routers, switches, firewalls, and the connections between systems that carry traffic. Without monitoring of bandwidth utilization, packet loss, routing health, jitter, and interface errors, network bottlenecks can silently degrade application performance.

- Storage systems: SAN arrays, NAS devices, and disk subsystems that house your data. Storage I/O saturation or failing drives often hide behind vague “slowness” complaints until monitoring reveals the actual bottleneck.

- Hypervisors: The virtualization layer (VMware ESXi, Hyper-V, KVM) that hosts your VMs. Hypervisor resource contention (too many VMs competing for the same physical resources) can cripple performance across multiple workloads.

- Containers: Kubernetes pods, Docker containers, and orchestration platforms that run modern applications. These ephemeral workloads require different monitoring approaches because they may only exist for a few seconds.

- Databases: SQL servers, PostgreSQL instances, MongoDB clusters, and other data stores. Database performance problems like slow queries, lock contention, and connection pool exhaustion often manifest as infrastructure issues.

- Cloud services like Azure or AWS: AWS EC2 instances, Azure VMs, managed services, and cloud-native resources. Cloud monitoring must track not just performance but also cost efficiency and service health across providers.

- Full-stack observability: This goes further by unifying logs, metrics, and traces to explain why something is happening, not just what is failing.

» Don’t believe it’s possible? Here’s how Autonomous IT operations eliminate 90% of outages

Building a monitoring system that actually works

A solid infrastructure-monitoring rollout needs both technical foundations and organizational readiness.

Step 1: Adapt your architecture to each environment

Monitoring architectures must adapt to your environment’s operational model, or you risk blind spots. Here’s what to keep in mind:

- On-premises estates require agents and SNMP because hardware-layer metrics (thermals, PSU failures, RAID status) are only exposed locally.

- Cloud environments shift the focus to API-based telemetry, service health, and cost-efficiency metrics. You’re monitoring abstracted resources where the underlying hardware is invisible, so your approach must rely on what cloud providers expose through their APIs.

- Hybrid setups need normalization layers that correlate data across both worlds.

- Containers and serverless workloads require ephemeral-aware monitoring using sidecars or eBPF because instances may only last for a few seconds. Traditional agent-based approaches can’t keep up with workloads that spin up and down hundreds of times per day.
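The normalization layer mentioned for hybrid setups can be sketched as a small adapter per telemetry source that emits one shared record type. Both payload shapes below are hypothetical (real agents and cloud APIs each have their own formats), and `MetricSample`, `from_agent`, and `from_cloud_api` are names invented for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class MetricSample:
    """Common schema that both on-premises and cloud telemetry are mapped into."""
    source: str          # "agent" or "cloud_api"
    resource_id: str
    metric: str
    value: float         # percent, 0-100
    timestamp: datetime  # timezone-aware, UTC


def from_agent(payload: dict) -> MetricSample:
    # Hypothetical agent payload:
    # {"host": "db01", "cpu_pct": 91, "ts": <epoch seconds>}
    return MetricSample(
        source="agent",
        resource_id=payload["host"],
        metric="cpu_utilization",
        value=float(payload["cpu_pct"]),
        timestamp=datetime.fromtimestamp(payload["ts"], tz=timezone.utc),
    )


def from_cloud_api(payload: dict) -> MetricSample:
    # Hypothetical cloud payload:
    # {"instance_id": "i-123", "cpu_fraction": 0.5, "time": "<ISO 8601 with offset>"}
    return MetricSample(
        source="cloud_api",
        resource_id=payload["instance_id"],
        metric="cpu_utilization",
        value=float(payload["cpu_fraction"]) * 100.0,  # fraction -> percent
        timestamp=datetime.fromisoformat(payload["time"]),
    )
```

Once every source lands in the same shape, with the same metric names, units, and time zone, alert rules and dashboards can be written once instead of per environment.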

Step 2: Establish telemetry sources to integrate monitoring with IT processes

Without reliable telemetry sources (agents on servers, API access to cloud services, and SNMP/flow visibility for networks), you leave room for data gaps that create further blind spots.

Having the right data sources in place helps you set clear roles, escalation paths, and SLAs that define what “normal” means, so alerts trigger action instead of noise. Change-management policies must ensure that onboarding new assets automatically enrolls them into monitoring to avoid the common “shadow IT” gap seen in hybrid environments.
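One simple way to catch enrollment gaps is to compare the asset inventory against the list of hosts that are actually reporting telemetry. The sketch below assumes both lists are available as plain hostnames; where they come from (a CMDB export, a monitoring API) is left open, and the function names are hypothetical.

```python
from typing import Iterable, List, Set


def _normalize(names: Iterable[str]) -> Set[str]:
    """Lower-case and trim hostnames so 'DB01 ' and 'db01' match."""
    return {name.strip().lower() for name in names if name.strip()}


def find_unmonitored(inventory: Iterable[str], monitored: Iterable[str]) -> List[str]:
    """Assets that exist in inventory but send no telemetry (the blind spots)."""
    return sorted(_normalize(inventory) - _normalize(monitored))


def find_unknown(inventory: Iterable[str], monitored: Iterable[str]) -> List[str]:
    """Hosts sending telemetry that inventory does not know about
    (possible shadow IT, or records that were never cleaned up)."""
    return sorted(_normalize(monitored) - _normalize(inventory))
```

Running a check like this on a schedule turns “did we enroll everything?” from an assumption into a report.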

Infrastructure monitoring becomes far more valuable when embedded directly into core IT processes rather than treated as a standalone tool:

- Capacity planning depends on long-term telemetry. Instead of guessing when you’ll run out of storage or compute, you forecast based on actual consumption trends.

- Change management workflows benefit when monitoring baselines are captured before and after deployments, helping teams detect regressions early. If performance degrades after a patch or configuration change, baseline comparisons make the cause immediately obvious.

- Incident response relies on real-time alerts aligned with runbooks. Teams using automated enrichment can expect faster triage compared with manual log-scraping. When an alert fires, enriched context (recent changes, topology maps, related metrics, etc.) arrives with it.

- Business-continuity planning improves as health metrics reveal system fragility. High disk I/O, memory saturation, or unstable network links often become early warning signals that certain systems won’t survive a failover or disaster recovery scenario.

When all these practices integrate, monitoring evolves from a diagnostic tool into a predictive operational engine, shaping stability and resilience across the organization’s entire digital estate.
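As an illustration of forecasting from consumption trends, the sketch below fits a straight line to daily storage usage and estimates the days left until a volume fills. A linear trend is an assumption made for simplicity; real growth is often seasonal or bursty, so treat the result as a rough planning signal.

```python
from typing import Optional, Sequence, Tuple


def linear_trend(values: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of y = slope * x + intercept, with x = 0, 1, 2, ... (days)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    var = sum((x - mean_x) ** 2 for x in range(n))
    slope = cov / var
    return slope, mean_y - slope * mean_x


def days_until_full(daily_used_gb: Sequence[float], capacity_gb: float) -> Optional[float]:
    """Days from the latest sample until the trend line reaches capacity.

    Returns None when usage is flat or shrinking, since no exhaustion date exists.
    """
    slope, intercept = linear_trend(daily_used_gb)
    if slope <= 0:
        return None
    last_day = len(daily_used_gb) - 1
    day_full = (capacity_gb - intercept) / slope
    return max(0.0, day_full - last_day)
```

For example, five daily readings of 100, 110, 120, 130, and 140 GB on a 200 GB volume give a trend of 10 GB per day, which leaves about six days of headroom.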

Step 3: Design alerts and dashboards for signal, not noise

Effective alerting and dashboard design rests on signal-to-noise control, not volume. Here’s what to keep in mind:

- Start by defining SLO-based thresholds on sustained conditions (e.g., CPU > 85% for 5 minutes) instead of alerting on instantaneous spikes. A single spike might mean nothing and isn’t worth the time it takes to check, but sustained elevation means something is wrong.

- Group alerts by service, not device, to avoid tool-sprawl chaos. When your e-commerce application slows down, you need to know the service is degraded, not receive 47 individual alerts from every VM, container, and load balancer involved in that service.

- Dashboards should highlight leading indicators like latency, queue depth, and memory pressure instead of vanity metrics like total uptime percentage. Use dependency-aware layouts that map VMs > hosts > storage arrays so teams can see failure chains at a glance. When a storage array saturates, you immediately see which VMs and applications are affected.

- Incident workflows need predefined ownership, escalation paths, and automated enrichment (logs, topology, last-change data). The result is a monitoring ecosystem that informs, not overwhelms, and supports fast and confident responses.
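The first two points above, sustained thresholds and service-level grouping, can be sketched in a few lines. This is a simplified illustration, not how any specific platform evaluates rules; the sample format and the `service` and `device` alert fields are assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Sequence, Tuple


def sustained_breach(samples: Sequence[Tuple[float, float]],
                     threshold: float, window_s: float) -> bool:
    """True if the value has stayed above threshold for at least window_s seconds.

    samples: (timestamp_seconds, value) pairs sorted by time, oldest first.
    Any sample at or below the threshold resets the clock, so an isolated
    spike never fires.
    """
    breach_start = None
    for ts, value in samples:
        if value > threshold:
            if breach_start is None:
                breach_start = ts
        else:
            breach_start = None
    if breach_start is None:
        return False
    return samples[-1][0] - breach_start >= window_s


def group_by_service(alerts: Iterable[dict]) -> Dict[str, List[str]]:
    """Collapse per-device alerts into one entry per affected service."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for alert in alerts:
        grouped[alert["service"]].append(alert["device"])
    return dict(grouped)
```

With one-minute samples, `sustained_breach(samples, threshold=85, window_s=300)` matches the “CPU > 85% for 5 minutes” rule, and `group_by_service` lets the on-call engineer receive a single notification per degraded service with the affected devices attached.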

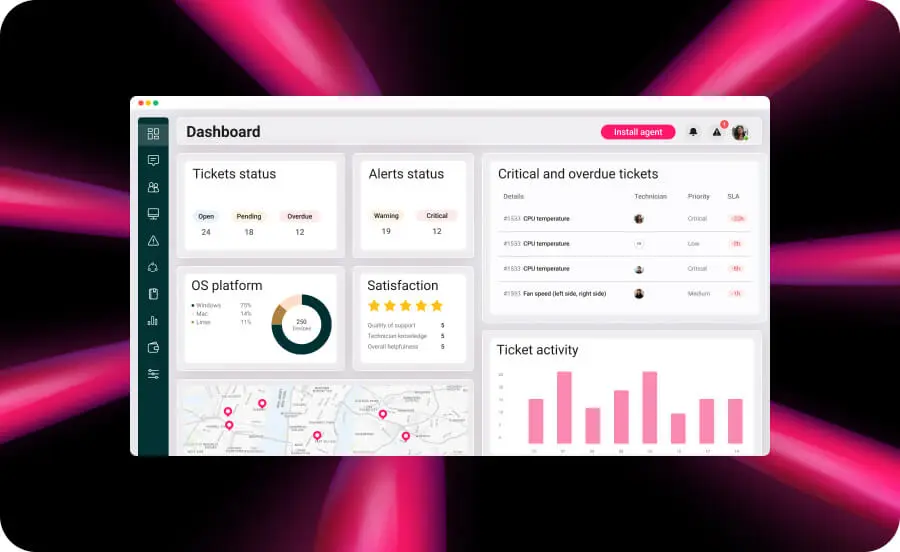

Atera’s customizable dashboards are a great option for helping you see all the important information you might need, like tickets, alerts, and more, depending on what you decide is the most important.

Step 4: Choose the right monitoring platform with automation and AI

Modern monitoring platforms leverage automation and AI to shift teams from reactive firefighting to proactive stability engineering. Agents stream real-time telemetry from servers, networks, containers, and cloud workloads. When paired with predictive analytics and anomaly detection, the right platforms can forecast resource exhaustion or unusual behavior hours before it becomes an outage.
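To give a feel for what anomaly detection means in practice, here is a deliberately simple sketch that flags values far from their recent history using a trailing z-score. Commercial platforms typically use more sophisticated models; the window size and the limit of 3 standard deviations are assumptions for illustration.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def zscore_anomalies(values: Sequence[float], window: int = 10,
                     z_limit: float = 3.0) -> List[int]:
    """Return indexes whose value deviates from the mean of the previous
    `window` samples by more than `z_limit` standard deviations."""
    anomalies: List[int] = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change as anomalous.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > z_limit:
            anomalies.append(i)
    return anomalies
```

One known weakness of this approach is that an outlier inflates the standard deviation of the windows that follow it, which can mask later anomalies. It also shows why explainability matters: with a rule this small, you can always say exactly why an alert fired.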

Self-healing autonomous endpoint management workflows (restarting services, clearing corrupted caches, rebalancing workloads) shave minutes off MTTR and free staff to handle higher-value tasks.
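A self-healing action such as a service restart is usually bounded so it cannot loop forever on a problem it is not fixing. The sketch below allows a limited number of automated restarts per time window and escalates to a human after that. The class, the limits, and the `restart` and `escalate` callbacks are all hypothetical placeholders for whatever your tooling provides.

```python
from collections import deque
from typing import Callable, Deque


class RestartGuard:
    """Allow at most `max_restarts` automated restarts per `window_s` seconds."""

    def __init__(self, max_restarts: int = 3, window_s: float = 600.0) -> None:
        self.max_restarts = max_restarts
        self.window_s = window_s
        self._history: Deque[float] = deque()

    def allow(self, now: float) -> bool:
        # Forget restarts that have aged out of the window.
        while self._history and now - self._history[0] >= self.window_s:
            self._history.popleft()
        if len(self._history) >= self.max_restarts:
            return False
        self._history.append(now)
        return True


def remediate(service: str, guard: RestartGuard, now: float,
              restart: Callable[[str], None],
              escalate: Callable[[str], None]) -> str:
    """Restart automatically while under the limit; otherwise hand off to a person."""
    if guard.allow(now):
        restart(service)
        return "restarted"
    escalate(service)
    return "escalated"
```

Passing the current time in as `now` (for example from `time.monotonic()`) keeps the guard easy to test and audit, which is part of keeping automation transparent.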

But you should watch for over-automation, blind trust in machine learning, and “black-box” alerting with no explainability. Strong governance, transparent tuning, and periodic rule audits keep automation powerful rather than risky. Organizations evaluating monitoring tools should focus on five critical criteria:

- Scalability: The tool must scale from a handful of servers to thousands, including cloud VMs, containers, network devices, and edge hardware, without performance degradation.

- Hybrid and cloud support: Many enterprises operate mixed architectures. Choose solutions that natively connect AWS, Azure, on-premises, and container telemetry without requiring separate tools for each environment.

- Unified visibility: Most mid-size enterprises maintain multiple monitoring tools, creating fragmentation and slowing incident response. Unified dashboards and vendor-agnostic device coverage prevent “tool sprawl.”

- Integration flexibility: APIs, webhooks, and plugins ensure the tool fits existing orchestration, SIEM and IT ticketing systems. Monitoring that operates in isolation becomes an information silo rather than an operational asset.

- Vendor reliability: Make sure the platform offers regular updates, comprehensive documentation, and SLA-backed support. You’re trusting it with visibility into your entire infrastructure, so you should choose vendors who treat that responsibility seriously.

Atera aligns with these criteria through its unified platform approach: it monitors endpoints, servers, and network devices from a centralized console integrated with the RMM platform, consolidates alerts into a unified dashboard, and integrates monitoring directly with ticketing, patch management, and automation workflows. The platform’s threshold-based alerting and auto-healing scripts enable proactive responses. For example, when CPU usage exceeds defined limits or services crash, automated remediation executes immediately without waiting for manual intervention.

Compared with managing multiple point solutions, Atera’s unified architecture eliminates integration gaps by bundling RMM, ticketing, and automation in a single platform. AI Copilot assists technicians with remediation guidance and generating remediation scripts from natural language instructions, while IT Autopilot handles routine end-user requests autonomously, reducing the support workload that would otherwise require manual technician intervention.

» Learn more about Autonomous IT and autonomous vs. automated

Turn monitoring into a competitive advantage

Modern IT infrastructure is too complex, too distributed, and too critical to manage blindly. The organizations that thrive aren’t the ones with the most infrastructure; they’re the ones who can see their infrastructure clearly, predict problems before they escalate, and respond to incidents in minutes instead of hours.

Infrastructure monitoring transforms IT from a reactive cost center into a proactive business enabler. It prevents expensive outages, eliminates wasted cloud spend, strengthens security posture, and frees teams to focus on strategic work instead of firefighting.

Atera provides a unified platform that consolidates infrastructure monitoring tools, ticketing, automation, and AI-powered insights into a single solution. Its agentic AI architecture, with AI Copilot and IT Autopilot, helps teams detect IT issues earlier, respond faster, and automate routine tasks, eliminating up to 40% of IT workload while reducing major outages.

» Interested? Try Atera for free
