Table of contents

- What is Retrieval-Augmented Generation (RAG)?

- What is Fine-Tuning in LLMs?

- How does Fine-Tuning work?

- Benefits of Fine-Tuning

- Challenges of Fine-Tuning

- RAG vs. Fine-Tuning: Key differences

- How Atera Incorporates AI for IT Management

- Atera’s unique positioning

- Choosing the right approach

As large language models (LLMs) transform industries, organizations face a crucial question: how can these models be optimized to meet specific needs? Two leading approaches, Retrieval-Augmented Generation (RAG) and fine-tuning, offer distinct paths to unlock the full potential of LLMs.

RAG integrates external knowledge dynamically, whereas fine-tuning customizes the model by updating its internal parameters. Both techniques enhance performance, but they suit different use cases and come with different challenges.

In this article, we’ll compare RAG and fine-tuning in depth, explore their unique benefits and limitations, and help you identify the best option for your needs.

What is Retrieval-Augmented Generation (RAG)?

Retrieval-augmented generation (RAG) is an advanced method used in AI systems to enhance the accuracy and relevance of responses from large language models (LLMs). Instead of relying solely on the static knowledge encoded during the model’s training, RAG dynamically retrieves relevant information from external data sources, such as databases, knowledge bases, or documents, and integrates it into the model’s output generation.

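Because new knowledge lives in the external source rather than in the model’s weights, this hybrid approach keeps responses relevant and current without retraining the model, which helps the system stay lightweight and scalable.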
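The retrieve-then-generate flow can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `DOCUMENTS` list stands in for a real knowledge base, a simple bag-of-words cosine similarity stands in for the embedding search that production systems typically use, and the final call to an LLM is left out, so the sketch stops at building the augmented prompt.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real system would query a database or document store.
DOCUMENTS = [
    "To reset a user password, open the admin console and choose Reset Password.",
    "Patch management schedules operating system updates during maintenance windows.",
    "Network monitoring alerts fire when a device stops responding to ping.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, top_k=1):
    """Return up to top_k documents that share vocabulary with the query."""
    query_vec = Counter(tokenize(query))
    scored = [(cosine_similarity(query_vec, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(query, documents, top_k=1):
    """Combine the retrieved context with the question into one prompt string."""
    context = retrieve(query, documents, top_k)
    context_block = "\n".join(f"- {c}" for c in context) or "- (no relevant context found)"
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {query}\n"
    )


if __name__ == "__main__":
    # The resulting prompt would be sent to an LLM of your choice (not shown).
    print(build_prompt("How do I reset a password?", DOCUMENTS))
```

Updating the system's knowledge in this sketch only requires editing `DOCUMENTS`; nothing about the model itself changes, which is the property that separates RAG from fine-tuning.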

What is Fine-Tuning in LLMs?

Fine-tuning refines a pre-trained LLM for a specific domain or task by training it on additional, specialized datasets. It “locks in” domain-specific knowledge for tasks requiring precision and expertise.

How does Fine-Tuning work?

| Step | Description |
| --- | --- |
| Data Collection | Compile a domain-specific dataset. |
| Training Process | Optimize the model using these new datasets. |
| Evaluation | Assess and refine the model’s performance. |
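The three steps can be sketched in miniature. This is a toy illustration under stated assumptions, not how an LLM is actually fine-tuned: a two-weight logistic model stands in for the pre-trained network, the feature vectors and labels are invented, and `fine_tune` and `accuracy` are hypothetical helper names. What it does show is the shape of the loop: start from existing parameters, update them on domain data, then measure the result on held-out examples.

```python
import math

# Step 1: data collection. A made-up domain dataset of (features, label) pairs.
# Features: [ticket mentions "password", ticket mentions "printer"]; label 1 = account issue.
TRAIN = [([1.0, 0.0], 1), ([1.0, 0.0], 1), ([0.0, 1.0], 0), ([0.0, 1.0], 0)]
HELD_OUT = [([1.0, 0.0], 1), ([0.0, 1.0], 0)]


def predict(weights, bias, x):
    """Probability of label 1 under a logistic model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune(weights, bias, data, lr=0.5, epochs=200):
    """Step 2: training. Update the existing parameters with gradient descent."""
    weights = list(weights)  # copy so the original parameters stay untouched
    for _ in range(epochs):
        for x, y in data:
            error = predict(weights, bias, x) - y  # gradient of log loss w.r.t. z
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def accuracy(weights, bias, data):
    """Step 3: evaluation. Fraction of examples classified correctly."""
    correct = sum((predict(weights, bias, x) >= 0.5) == bool(y) for x, y in data)
    return correct / len(data)


# Stand-in for the parameters of a pre-trained model.
pretrained_weights, pretrained_bias = [0.0, 0.0], 0.0
tuned_weights, tuned_bias = fine_tune(pretrained_weights, pretrained_bias, TRAIN)

if __name__ == "__main__":
    print("before:", accuracy(pretrained_weights, pretrained_bias, HELD_OUT))
    print("after: ", accuracy(tuned_weights, tuned_bias, HELD_OUT))
```

Unlike retrieval, the new knowledge ends up inside the parameters themselves, so incorporating fresh data means running the training step again.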

Benefits of Fine-Tuning

Fine-tuning LLMs offers transformative benefits for organizations seeking precision, customization, and control in their AI applications. By tailoring models to specific needs, businesses can unlock unparalleled accuracy and maintain strict data privacy.

Unparalleled precision

Fine-tuning enables laser-focused customization of LLMs to meet specific industry or organizational needs. Whether diagnosing rare medical conditions or generating highly technical documentation, fine-tuned models excel in delivering precise, context-aware results. Unlike generic models, they thrive on specialized datasets, ensuring the highest level of accuracy for niche applications.

Bespoke solutions for unique challenges

Every organization faces challenges that off-the-shelf solutions can’t always address. Fine-tuning empowers businesses to shape LLMs around their proprietary data, creating tools that reflect their unique workflows, customer needs, and industry nuances.

This customization offers a competitive edge, unlocking insights and efficiencies unavailable to generic implementations.

Data Privacy and Control

In an era of heightened data sensitivity, fine-tuning can help keep critical information secure. Organizations can train their LLMs in-house or on private infrastructure, minimizing exposure to external systems. This approach supports compliance requirements and builds customer trust by keeping confidential data where it belongs: within the organization.

Challenges of Fine-Tuning

Fine-tuning delivers exceptional precision but comes with notable challenges that organizations must consider. From resource demands to limited adaptability, these trade-offs can impact its suitability for dynamic or evolving use cases.

Resource-intensive demands

Fine-tuning is no small feat—it requires robust computational infrastructure, substantial storage, and skilled teams to manage training processes. This can be a hurdle for smaller organizations or those new to AI, as the time and resources needed for proper implementation may outstrip initial expectations.

Limited flexibility

While fine-tuned models are exceptionally accurate within their specialized domains, they often struggle when tasked with general queries or topics outside their training data. This rigidity makes them less adaptable in rapidly evolving industries where staying up-to-date is crucial, requiring frequent retraining to maintain relevance.

By weighing these benefits and challenges, organizations can determine if fine-tuning is the right approach for their needs or if alternatives like RAG may be a better fit.

RAG vs. Fine-Tuning: Key differences

| Feature | RAG | Fine-Tuning |
| --- | --- | --- |
| Adaptability | Dynamic and real-time updates | Static knowledge; requires retraining |
| Cost | Cost-effective without retraining | Expensive due to computational needs |
| Use Cases | Real-time queries, FAQs | Domain-specific tasks, custom chatbots |

Choosing the right technique

When optimizing workflows with AI, selecting the right technique depends on your specific needs. Whether you prioritize real-time updates and extensive data access or require precision in specialized tasks, understanding the strengths of RAG and Fine-Tuning is key.

- Opt for RAG if you need live updates and access to vast knowledge bases.

- Choose Fine-Tuning for highly specialized tasks where precision is critical.

How Atera Incorporates AI for IT Management

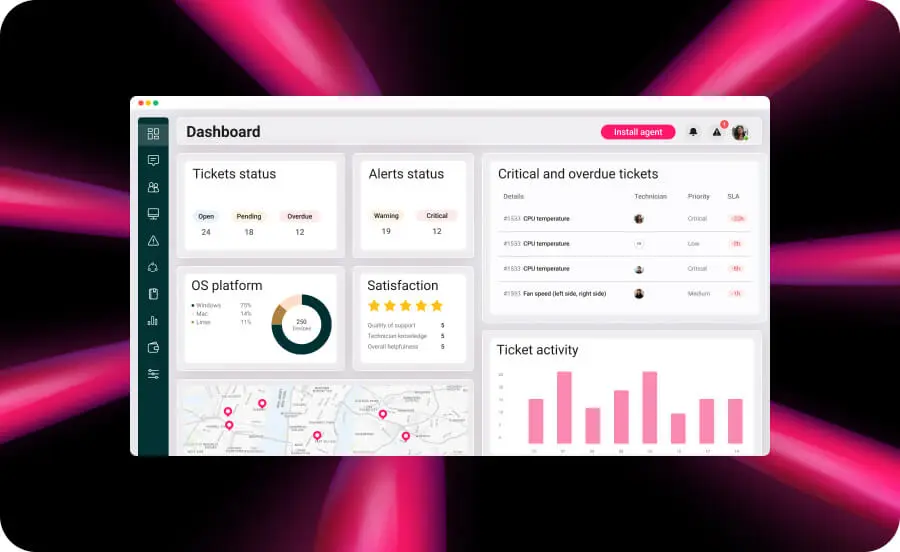

Through its network monitoring capabilities, Atera helps IT systems run smoothly, enabling proactive management with the help of real-time insights.

Atera’s AI-Powered Features:

- Autonomous IT Management: Agentic AI enables Atera to predict IT issues and take proactive measures before they impact business operations. By integrating real-time data retrieval (akin to RAG), Atera ensures that IT teams always have the most relevant and up-to-date insights.

- Adaptive Learning and Optimization: By fine-tuning AI models on historical IT support data, Atera enhances problem resolution accuracy, reducing downtime and increasing efficiency.

- Smart Automation and Decision-Making: Atera’s AI-driven automation tools streamline ticket resolution, patch management, and system monitoring, freeing up IT teams for strategic tasks.

- Personalized AI Assistance: By combining the principles of Fine-Tuning with real-time data retrieval, Atera delivers AI-powered insights tailored to specific IT infrastructures, improving operational efficiency.

This unique integration of Agentic AI, RAG, and Fine-Tuning sets Atera apart, offering a comprehensive AI-driven IT management solution that evolves dynamically with changing technological needs.

Atera’s unique positioning

Competitors like SolarWinds and NinjaOne also integrate AI, but Atera differentiates itself with a cohesive, all-in-one approach. While other platforms may excel in singular aspects like monitoring, Atera combines monitoring, automation, ticketing, and reporting in a unified dashboard.

This holistic strategy mirrors the versatility of RAG, providing flexibility and scalability that cater to IT professionals’ evolving needs.

Choosing the right approach

RAG and Fine-Tuning each offer unique advantages:

- RAG is ideal for real-time knowledge and dynamic adaptability.

- Fine-Tuning shines in precision tasks and domain-specific solutions.

For IT professionals, understanding these techniques enables smarter decisions for business automation and innovation.

Atera’s AI-powered features illustrate how modern platforms can seamlessly incorporate these principles, streamlining IT management and boosting productivity.

Take the next step with Atera: Explore its AI-powered features and transform your IT workflow!

Try Atera for 30 days for free or book a demo today!
