Every IT leader has felt the pressure to “adopt AI.” But the real question isn’t whether the technology can help your team. It’s whether the model behind the tool has been trained well enough to earn your trust. Understanding how AI training works can help you find the right tool for your IT environment.

Key Takeaways

- AI models learn patterns from large, diverse datasets through repeated training cycles.

- Training involves four core phases: pre-training, fine-tuning, evaluation, and continuous post-deployment learning.

- High-quality data, human oversight, and robust testing help reduce hallucinations and bias.

- For IT use, AI training needs to emphasize continuous learning, updating models to recognize emerging threats.

How AI Models Are Trained

AI models aren’t born intelligent; they have to be trained. Rather than following hand-written, rule-based instructions, these systems use machine learning and deep learning to identify patterns across massive datasets. Neural networks loosely mirror how humans absorb information: by seeing many examples, recognizing relationships within those examples, and improving their accuracy over time.

This type of training typically includes three pillars:

- Data: This is the foundation of AI model training. AI models require large, diverse, and high-quality datasets.

- Algorithms: The instructions that guide AI learning. Training algorithms use mathematical optimization to identify patterns and improve accuracy over time.

- Compute: The processing resource behind AI learning. Modern AI models need GPUs, TPUs, or distributed systems to handle large workloads.
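To see how the three pillars fit together, consider a toy training loop. This is only a minimal sketch, not how production models are built: the sample data, the learning rate, and the function name `train_linear_model` are all assumptions made for illustration. It fits a straight line to a handful of points with gradient descent, showing how repeated passes over the data gradually reduce error.

```python
def train_linear_model(data, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b to (x, y) pairs with plain gradient descent."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Nudge the parameters in the direction that lowers the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical data generated from the line y = 2x + 1.
samples = [(0, 1), (1, 3), (2, 5), (3, 7), (4, 9)]
weight, bias = train_linear_model(samples)
print(round(weight, 2), round(bias, 2))
```

Here the data is the list of samples, the algorithm is the gradient update, and the compute is the loop itself. Real models apply the same idea to billions of parameters, which is why specialized hardware becomes necessary.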

IT professionals don’t need to be AI experts to use today’s tools, but having a working knowledge of the training process can prove useful. You’ll be better able to evaluate vendor claims, verify AI safety, and select reliable platforms.

Phase 1: Pre-Training

Pre-training is the first and most intensive stage of AI development. This is where the model builds its foundational understanding, learning broad patterns, relationships, and structures before more specific tuning begins. During this phase, the model develops the general reasoning abilities it will later refine for specialized use cases.

What Happens During Pre-Training?

To build its baseline knowledge, the model ingests large datasets during pre-training, which can include text, logs, images, and telemetry. It’s during this stage that a model begins recognizing patterns, relationships, and underlying structures.

For example, a model might consume documentation, public datasets, books, and code repositories to develop a strong understanding of language and structure. In contrast, anomaly detection models rely more on historic network traffic and endpoint logs, learning what “normal” activity looks like so they can identify deviations later.
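The idea of learning what “normal” looks like can be sketched with a simple statistical baseline. This is a deliberately simplified stand-in (a z-score threshold) rather than a trained anomaly detection model, and the traffic figures, threshold, and function names are hypothetical.

```python
import statistics


def fit_baseline(history):
    """Learn what 'normal' looks like: the mean and standard deviation."""
    return statistics.mean(history), statistics.stdev(history)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag values more than `threshold` standard deviations from the mean."""
    mean, stdev = baseline
    if stdev == 0:
        return value != mean
    return abs(value - mean) / stdev > threshold


# Hypothetical requests-per-minute readings from a quiet period.
normal_traffic = [120, 118, 125, 122, 119, 121, 124, 117, 123, 120]
baseline = fit_baseline(normal_traffic)
print(is_anomalous(122, baseline))  # a typical reading
print(is_anomalous(480, baseline))  # a sudden spike
```

Production systems learn far richer notions of normal behavior, but the principle is the same: build a picture from historical data first, then measure how far new activity deviates from it.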

During pre-training, engineers use training objectives such as:

- Next-token prediction: This core training method teaches a model to predict the next token (a word, word fragment, or other element) in a sequence.

- Classification tasks: The model learns to sort data into categories, such as distinguishing benign traffic from malicious activity.

- Reconstruction tasks: Models such as autoencoders learn to rebuild inputs from compressed or partially masked versions, a technique used to strengthen the model’s understanding of structure.
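Next-token prediction is easier to picture with a tiny example. The sketch below swaps the neural network for simple bigram counts, so it is an illustration of the objective rather than of how a large language model works internally. The miniature corpus and the function names are assumptions.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """Count how often each token follows another across the corpus."""
    follow_counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for current, following in zip(tokens, tokens[1:]):
            follow_counts[current][following] += 1
    return follow_counts


def predict_next(model, token):
    """Return the most frequently observed next token, or None if unseen."""
    candidates = model.get(token.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical miniature "corpus" of log-style messages.
corpus = [
    "disk usage exceeded threshold",
    "disk usage exceeded quota",
    "disk usage exceeded threshold on server",
    "cpu usage normal",
]
model = train_bigram_model(corpus)
print(predict_next(model, "exceeded"))
```

A real model replaces the lookup table with learned parameters and considers far more context than a single preceding token, but it is still rewarded for the same thing: assigning high probability to what actually comes next.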

Pre-training is the most resource-intensive phase, often running for days or weeks across large GPU clusters.

Why Strong Pre-Training Leads to Better IT Automation

In IT, stronger pre-training means:

- Deeper understanding of system and network behavior

- More precise automation triggers

- Improved troubleshooting recommendations

- Fewer false positives and negatives

Today’s IT teams often use AI for remote monitoring and management (RMM) and professional services automation (PSA). A well-trained system provides the foundational reasoning necessary to understand diverse logs, scripts, and error patterns.

Phase 2: Fine-Tuning

The next step is to adjust the model to fit specific use cases. You may need a model to summarize tickets or identify suspicious activity, for instance.

How Fine-Tuning Works

Fine-tuning uses smaller, high-quality datasets to shape the model’s behavior. These datasets are often expert-labeled: human specialists with domain knowledge review the data and mark the correct answers so the model learns from verified examples.

The process typically uses these methods:

| Fine-Tuning Method | What It Does | Common Use Cases |
| --- | --- | --- |
| Supervised Fine-Tuning | Trains the model to produce responses in the format users expect | Ticket summarization, troubleshooting suggestions |
| Reward Model Training | Builds a model that converts human preferences into a numerical reward signal | Prioritizing alert explanations, refining root-cause insights |
| Policy Optimization | Updates the model’s behavior based on reward signals while avoiding unstable swings or major unintended changes | Ensuring generated scripts are safe, stabilizing automated recommendations |
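To make the reward-model idea more concrete, here is a rough sketch of how pairwise human preferences can be turned into numerical scores. It uses a simple Bradley-Terry-style logistic model with one score per response, which is an assumption for illustration; real reward models are neural networks that score arbitrary text. The response labels and reviewer judgments are hypothetical.

```python
import math


def train_reward_scores(preferences, learning_rate=0.1, epochs=500):
    """Learn one score per response from (preferred, rejected) pairs."""
    scores = {}
    for preferred, rejected in preferences:
        scores.setdefault(preferred, 0.0)
        scores.setdefault(rejected, 0.0)
    for _ in range(epochs):
        for preferred, rejected in preferences:
            # Probability the current scores assign to the human's choice.
            margin = scores[preferred] - scores[rejected]
            p_correct = 1.0 / (1.0 + math.exp(-margin))
            # Gradient ascent on the log-likelihood of that choice.
            step = learning_rate * (1.0 - p_correct)
            scores[preferred] += step
            scores[rejected] -= step
    return scores


# Hypothetical reviewer judgments: (preferred summary, rejected summary).
preferences = [
    ("concise_summary", "vague_summary"),
    ("concise_summary", "verbose_summary"),
    ("verbose_summary", "vague_summary"),
]
scores = train_reward_scores(preferences)
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

Policy optimization then uses scores like these as the signal for adjusting the model, typically with safeguards that keep each update small.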

How Fine-Tuning Adapts AI Models for IT Workflows

In the IT field, effective fine-tuning leads to:

- More accurate and context-aware ticket summaries

- Clearer, more reliable troubleshooting guidance

- Better alignment with organizational policies and workflows

- Consistent, high-quality recommendations across similar incidents

Fine-tuning bridges the gap between a capable AI model and one that fits seamlessly into your IT operations. Targeted refinement lets the model mirror how your team triages issues, documents work, and resolves incidents, making AI tools faster to adopt and easier to trust.

Phase 3: Evaluation and Testing

After a model is trained and fine-tuned, the next step is to put it through rigorous evaluation and testing. This quality assurance step verifies that the model acts as intended, avoids risky actions, and supports real-world IT workflows without introducing instability. The most thorough evaluations run the model across multiple environments rather than a single test setup.

Key areas of evaluation include:

- Accuracy: Does the model generate correct responses?

- Consistency: Does it behave predictably across scenarios?

- Security: Is it resistant to prompt injection or adversarial inputs?

- Bias testing: Does the model avoid unfair assumptions or inaccurate generalizations?

- Performance benchmarks: How does it perform in latency, throughput, and resource usage?
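Several of these checks can be automated in a small evaluation harness. The following is a minimal sketch covering accuracy, consistency, and latency only. The keyword-based `toy_alert_classifier` is a hypothetical stand-in for a real model, and the test cases are invented for the example.

```python
import time


def evaluate_model(model_fn, test_cases, repeats=3):
    """Measure accuracy, run-to-run consistency, and average latency."""
    correct = 0
    consistent = 0
    total_seconds = 0.0
    for prompt, expected in test_cases:
        outputs = []
        for _ in range(repeats):
            start = time.perf_counter()
            outputs.append(model_fn(prompt))
            total_seconds += time.perf_counter() - start
        correct += outputs[0] == expected
        # Consistent means every repeated run gave the same answer.
        consistent += len(set(outputs)) == 1
    n = len(test_cases)
    return {
        "accuracy": correct / n,
        "consistency": consistent / n,
        "avg_latency_ms": 1000 * total_seconds / (n * repeats),
    }


def toy_alert_classifier(message):
    """Hypothetical stand-in for a real model: keyword-based severity."""
    return "critical" if "failed" in message.lower() else "info"


test_cases = [
    ("Backup job failed on server-01", "critical"),
    ("User logged in successfully", "info"),
    ("Disk at 97% capacity", "critical"),
]
report = evaluate_model(toy_alert_classifier, test_cases)
print(report)
```

The third test case is missed on purpose: the stand-in classifier has no notion of disk capacity, so the harness reports less than perfect accuracy. Surfacing gaps like that before deployment is the point of this phase.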

For IT use cases, evaluation may include:

- Script-generation audits

- Network and endpoint simulation

- Load testing under live monitoring

How Evaluation and Testing Protect IT Operations

In many IT environments, AI now plays a direct role in day-to-day operations, from interpreting alerts to recommending remediation steps. For these systems to be effective, the underlying models need to be thoroughly evaluated.

Strong evaluation and testing help ensure:

- Safe automation that won’t deploy incorrect scripts or remediation steps

- Reliable decision support, especially during high-pressure incidents

- Accurate interpretation of logs, alerts, and device behavior

- Predictable performance, even during heavy system activity

Phase 4: Deployment and Continuous Learning

Once deployed, an AI model enters a feedback loop of real-world interaction. Continuous learning is essential because IT environments are dynamic: your team is likely dealing with new threats on top of regular system updates and configuration changes.

Continuous learning may mean retraining on new logs, refining guardrails, or adjusting behaviors to prevent accuracy degradation over time. Regular monitoring keeps AI models stable and aligned with your internal policies.
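One simple way to picture this monitoring is a rolling accuracy check that signals when retraining may be due. The sketch below assumes you can label each prediction as correct or incorrect after the fact. The `DriftMonitor` class, its thresholds, and the simulated outcomes are hypothetical.

```python
from collections import deque


class DriftMonitor:
    """Track rolling accuracy and flag when it falls below a baseline."""

    def __init__(self, baseline_accuracy, tolerance=0.05, window=50):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.results = deque(maxlen=window)

    def record(self, was_correct):
        self.results.append(bool(was_correct))

    def rolling_accuracy(self):
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def needs_retraining(self):
        # Only decide once the window is full, to avoid noisy early alarms.
        if len(self.results) < self.results.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.95, window=20)
for outcome in [True] * 20:
    monitor.record(outcome)
print(monitor.needs_retraining())  # healthy period

# Simulate a new threat pattern the model keeps misjudging.
for outcome in [True, False] * 10:
    monitor.record(outcome)
print(monitor.needs_retraining())  # accuracy has drifted
```

In practice the retraining signal would feed a review process rather than trigger an automatic update, so that humans stay in the loop.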

Keeping AI Effective After Deployment

In the IT field, ongoing monitoring and improvement enable:

- More accurate detection of new or evolving issues

- Better alignment with live operational data

- Reduced performance drift

- Improved reliability under fluctuating workloads

Conclusion

Understanding how AI models are trained helps IT professionals determine which tools bring long-term value. Modern IT environments need AI capabilities that are secure, continuously improving, and aligned with their business goals.

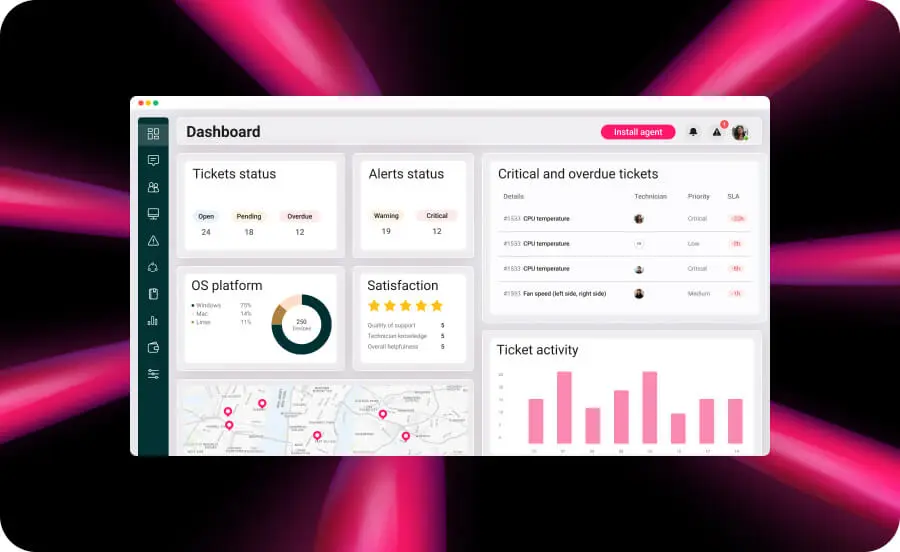

With Atera, your team can use AI tools as part of your existing workflows to drive a truly autonomous IT environment. You won’t need to worry about training models or managing specialized hardware; instead, Atera helps automate repetitive work, surface smarter insights, and speed up resolution times. This shift toward the autonomous enterprise ensures your technicians can move away from manual maintenance and focus on higher-impact, strategic tasks.
