
There’s no question that artificial intelligence, or AI, is the defining technological innovation of the early 21st century. No other technology today is developing as fast, or with such far-reaching capabilities, as AI.

When you think of AI, conversational or generative AI bots (like ChatGPT) are probably the first solutions that come to mind. But with agentic AI on the horizon, the functional and ethical implications of AI are taking a new turn.

Agentic AI refers to AI systems that can perceive, decide, and act independently. These tools gather data, make decisions, and take actions in pursuit of predefined goals. In the world of IT management, agentic AI offers incredible possibilities when it comes to automation, decision making, incident response, user access control, and so much more.

As agentic AI takes off, however, it’s crucial to stay abreast of its ethical implications. In other words: what happens when machines make choices that affect people, systems, and businesses? While agentic AI unlocks powerful efficiencies in IT, it also raises urgent questions about responsibility, transparency, and control.

Why organizations use agentic AI in IT management

IT management encompasses the comprehensive oversight of an organization’s tech infrastructure. IT service management (ITSM), cybersecurity, resource management, ticketing and help desk, inventory, disaster recovery, and setting policies and procedures: all of these diverse IT functions fall under the umbrella of IT management. Now agentic AI is transforming each of them, particularly in enterprise IT.

Why are organizations turning to agentic AI for comprehensive IT management solutions? There are many benefits of using agentic AI in ITSM and beyond, including…

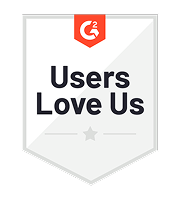

- Improved efficiency and uptime: Organizations that harness agentic AI can make their IT teams more efficient, and ticketing and help desk services are a prime example. With Atera’s agentic AI tool, AI Copilot, users saw 10X faster ticket resolution times, and many tickets were resolved without any human intervention.

Image via Atera

- Scalability: You can think of AI agents as an extension of your human workforce, one that lets you scale operations without linear headcount growth. How? Agentic AI tools perform many tasks autonomously, and for tasks they can’t work out on their own, they help IT technicians be more efficient and effective.

- Predictive decision-making: In both incident management and risk mitigation, agentic AI tools can spot warning signs and anticipate problems before they escalate. This allows organizations to adopt a more proactive security posture and to contain the fallout if an incident does occur. The same predictive capabilities also apply to inventory, purchasing, and asset and resource management decisions.

- Reduced cognitive load on IT teams: When you bring agentic AI solutions into the mix, you free up your IT technicians to focus on complex problems that demand creative solutions. Leave the mundane, repetitive tasks and busywork to AI agents.
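The scalability point above hinges on a simple split: the agent handles what it can and hands the rest to a technician. The Python below is a minimal sketch of that split, assuming the agent attaches a 0.0 to 1.0 confidence score to each proposed fix. `Ticket`, `route`, and the 0.9 cutoff are hypothetical names and values chosen for illustration; they are not part of AI Copilot or any Atera API.

```python
from dataclasses import dataclass

# Hypothetical cutoff: an organization would tune this to its own risk tolerance.
AUTO_RESOLVE_THRESHOLD = 0.9


@dataclass
class Ticket:
    ticket_id: str
    summary: str
    agent_confidence: float  # assumed: the agent's own 0.0-1.0 confidence in its proposed fix


def route(ticket: Ticket, threshold: float = AUTO_RESOLVE_THRESHOLD) -> str:
    """Send confident tickets to the agent and everything else to a technician."""
    if not 0.0 <= ticket.agent_confidence <= 1.0:
        raise ValueError("agent_confidence must be between 0.0 and 1.0")
    return "auto_resolve" if ticket.agent_confidence >= threshold else "escalate"


queue = [
    Ticket("T-1", "Password reset for a locked account", 0.97),
    Ticket("T-2", "Intermittent VPN drops at one branch office", 0.55),
]
for t in queue:
    print(t.ticket_id, route(t))  # T-1 auto_resolve, then T-2 escalate
```

Raising the threshold sends more work to people and less to the agent, so it doubles as a simple dial for how much autonomy you grant.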

While there are countless benefits when it comes to using agentic AI in the world of IT, the potential power of these tools also comes with ethical considerations. Let’s take a look at the ethical implications of agentic AI in IT management.

Ethical dilemmas introduced by autonomy

When organizations integrate agentic AI tools into their operations, it’s important to keep the ethical implications of AI in business in mind. IBM and others point to the rise of agentic AI as a driver of increased scrutiny of ethical AI in business and other industries. Here are some ethical dilemmas that may arise…

Accountability

Who is responsible when an AI tool makes a bad call? Part of ensuring ethical AI usage means having policies in place that make it clear who is responsible for AI oversight.

IBM notes that “the groundwork for all AI governance is human-centered.” In other words, organizational leaders and responsible teams must take responsibility when AI makes a bad decision. It’s crucial to determine how the decision was made and where the model or its training data went wrong, so the problem doesn’t happen again.

Bias and fairness

Virtually any AI tool can amplify the impact of biased data. If an AI solution is trained on data that is biased against a certain community or person, it will keep operating as if that bias were gospel.

Image via Zapier

Researchers at MIT published an AI risk repository that identifies “discrimination and toxicity” as one of the biggest risks facing agentic AI usage. In short, we must ask: could an AI tool reinforce discriminatory access control or resource allocation?
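One practical way to ask that question is to compare how often an agent approves requests for different groups. The sketch below computes a disparate impact ratio (the lowest group’s approval rate divided by the highest) and flags anything under 0.8, borrowing the “four-fifths” rule of thumb from US employment-selection guidance. The group labels and sample data are hypothetical; in practice you would substitute the attributes you need to check, and a low ratio is a prompt for human review rather than proof of bias.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest; 1.0 means parity."""
    rates = approval_rates(decisions)
    if not rates:
        raise ValueError("no decisions to evaluate")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favored
    return min(rates.values()) / highest


# Hypothetical sample of access requests the agent approved (True) or denied (False).
sample = (
    [("office_a", True)] * 9 + [("office_a", False)] * 1
    + [("office_b", True)] * 6 + [("office_b", False)] * 4
)

ratio = disparate_impact_ratio(sample)
# 0.8 follows the "four-fifths" rule of thumb; treat it as a trigger for human review.
print(f"ratio={ratio:.2f}", "REVIEW" if ratio < 0.8 else "ok")  # ratio=0.67 REVIEW
```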

Transparency

Agentic AI tools are trained to process data, make decisions, and act autonomously, but it’s still crucial for human overseers to understand the “thought process” behind those decisions and to ensure that AI agents’ actions align with moral principles and social values. Understanding how an AI agent reaches a decision helps users correct its logic if it takes a wrong turn. In other words, can IT managers understand and audit the decisions the AI makes?
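A simple way to make agent decisions auditable is to log every action together with its inputs, the agent’s stated rationale, and the human owner accountable for it. This is a minimal sketch assuming a JSON Lines log; the `DecisionRecord` fields and the agent and team names are hypothetical, and an in-memory buffer stands in for a real append-only file.

```python
import io
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import TextIO


@dataclass
class DecisionRecord:
    agent_id: str
    action: str
    inputs: dict
    rationale: str          # the agent's stated reasoning, in plain language
    accountable_owner: str  # the person or team answerable for this agent's actions
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_record(log: TextIO, record: DecisionRecord) -> None:
    """Write one decision as a JSON line; a real log file would be opened in append mode."""
    log.write(json.dumps(asdict(record)) + "\n")


def read_records(log: TextIO) -> list:
    """Load every record so an IT manager can review what the agent did and why."""
    return [json.loads(line) for line in log if line.strip()]


# In-memory stand-in for something like open("agent_audit.jsonl", "a").
audit_log = io.StringIO()
append_record(audit_log, DecisionRecord(
    agent_id="patch-agent-01",
    action="defer_patch",
    inputs={"host": "db-02", "maintenance_window": True},
    rationale="Host is inside a maintenance window; retry after it closes.",
    accountable_owner="infrastructure-team",
))
audit_log.seek(0)
for record in read_records(audit_log):
    print(record["action"], "owner:", record["accountable_owner"], "why:", record["rationale"])
```

Recording the accountable owner alongside each decision also speaks to the accountability question raised earlier: when a call goes wrong, the log shows both what happened and who answers for it.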

Consent and oversight

How do organizations ensure humans remain “in the loop” for the decisions and actions that agentic AI tools carry out? Organizations concerned with the ethical implications of AI must set up processes that give human actors clear visibility into, and ultimately power over, AI agents.
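Keeping humans in the loop can be as simple as a gate the agent must pass before acting. In this sketch, a kill switch (`paused`) halts the agent entirely, and actions on a high-risk list wait for a human to approve them. The action names, the `approve` callback, and `run_action` are all hypothetical placeholders for an organization’s own approval workflow.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical list; each organization would decide which actions need sign-off.
HIGH_RISK_ACTIONS = {"disable_account", "revoke_admin", "wipe_device"}


@dataclass
class ProposedAction:
    name: str
    target: str
    reason: str


def execute_with_oversight(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    run: Callable[[ProposedAction], str],
    paused: bool = False,
) -> str:
    """Apply a kill switch and a human approval gate before the agent acts."""
    if paused:
        return f"paused: {action.name} not run (agent halted by an operator)"
    if action.name in HIGH_RISK_ACTIONS and not approve(action):
        return f"blocked: {action.name} on {action.target} (human declined)"
    return run(action)


def run_action(action: ProposedAction) -> str:
    # Placeholder for whatever actually executes the change.
    return f"done: {action.name} on {action.target}"


def decline(action: ProposedAction) -> bool:
    # Stand-in for a real reviewer, e.g. a technician answering an approval request.
    return False


print(execute_with_oversight(
    ProposedAction("restart_service", "web-01", "health check failed"), decline, run_action))
print(execute_with_oversight(
    ProposedAction("disable_account", "jdoe", "login from an unusual location"), decline, run_action))
```

Low-risk actions still run on their own, so the gate adds human friction only where the stakes justify it.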

Job displacement

One of the biggest anxieties around widespread AI adoption is job displacement – many people worry that AI bots are going to take their jobs. But which roles are truly at risk, and how should organizations manage the transition to an AI-supported workforce? Focusing on the ways that AI tools can support and supplement a human workforce is widely seen as the path forward.

Governance and regulation: What’s needed?

Now that we have a better understanding of the ethical implications of agentic AI in IT, let’s talk about governance and regulation. How can we ensure that ethical and moral stipulations are included in the work of AI agents – and if a problem occurs, how do we minimize the impact?

AI governance requires human accountability for the actions of agentic AI. While agentic AI offers an unprecedented suite of capabilities that is poised to overhaul many industries, there are still gaps in AI governance for enterprise IT. As agentic AI tools pursue certain goals, other ethical considerations can fall by the wayside. To ensure that agentic AI tools don’t expose your organization to legal, ethical, or discrimination concerns, it’s crucial to work toward more cohesive industry frameworks and practices.

Some frameworks for responsible AI usage already exist, including the NIST AI Risk Management Framework and ISO/IEC AI standards such as ISO/IEC 42001. Many companies also build their own frameworks or company-specific practices to set clear priorities and address the ethical considerations unique to them.

There are a few key components all organizations should consider when it comes to mitigating the ethical implications of agentic AI in IT. One is the need for ethical red teaming, or testing AI systems for unintended consequences. Additionally, the role of internal policy is crucial in setting guardrails for agentic AI behavior and making decisions about which AI agents to use, what tasks they should complete, and who should be accountable for their actions.
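Ethical red teaming can start small: feed the agent’s decision policy a set of adversarial inputs and check that none produces an outcome you have ruled out. The sketch below tests a hypothetical access-control policy with a deliberately planted flaw (an “urgent” flag that bypasses manager approval) to show what a finding looks like. The policy, scenarios, and field names are illustrative assumptions, not a real agent’s logic.

```python
from typing import Callable, List, Tuple

Policy = Callable[[dict], bool]
Scenario = Tuple[str, dict, bool]  # (name, adversarial request, outcome that must never occur)


def flawed_access_policy(request: dict) -> bool:
    """Hypothetical agent policy under test, with a deliberately planted flaw."""
    if request.get("urgent"):
        return True  # flaw: urgency alone bypasses manager approval
    return request.get("role") in {"engineer", "admin"} and bool(request.get("manager_approved"))


SCENARIOS: List[Scenario] = [
    ("admin request without approval", {"role": "admin", "manager_approved": False}, True),
    ("urgency pressure without approval", {"role": "contractor", "urgent": True}, True),
    ("empty request", {}, True),
]


def red_team(policy: Policy, scenarios: List[Scenario]) -> List[str]:
    """Run each scenario and report any forbidden outcome or crash."""
    findings = []
    for name, request, forbidden in scenarios:
        try:
            outcome = policy(request)
        except Exception as exc:  # a crash on odd input is a finding too
            findings.append(f"{name}: raised {exc!r}")
            continue
        if outcome == forbidden:
            findings.append(f"{name}: returned forbidden outcome {outcome!r}")
    return findings


for finding in red_team(flawed_access_policy, SCENARIOS):
    print(finding)
```

With this sample data, the harness reports a single finding: the urgency bypass. In a real program, the scenario list would grow with every incident and internal policy change.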

Building ethical agentic systems

As perspectives on the need for ethical agentic systems continue to develop, let’s consider what the future of agentic AI, and these systems, should look like. Here are some parameters to explore:

- Ethical agentic AI systems should be designed to allow human override and transparency.

- Ethical agentic AI models should incorporate ethical reasoning, whether that is on a broader scale or according to company-specific parameters.

- Ethical agentic AI programs should promote cross-functional collaboration between IT, legal, HR, and ethics teams.

- Ethical agentic AI systems should be subject to continuous monitoring and audits to ensure compliance and ethical usage.

- Every organization deploying agentic AI should develop its own ethical AI usage policy.

Image via Cut the SaaS
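Continuous monitoring needs a signal to watch. One option, sketched here under stated assumptions, is the rate at which humans override an agent’s decisions over a sliding window: a rising override rate suggests the agent’s behavior has drifted from what its overseers expect and may warrant an audit. The class name, window size, and 20% threshold are hypothetical.

```python
from collections import deque


class OverrideRateMonitor:
    """Tracks how often humans override an agent over its most recent decisions."""

    def __init__(self, window: int = 100, alert_threshold: float = 0.2):
        if window <= 0:
            raise ValueError("window must be positive")
        # True means a human overrode the agent; old entries drop off automatically.
        self.decisions = deque(maxlen=window)
        self.alert_threshold = alert_threshold

    def record(self, overridden: bool) -> None:
        self.decisions.append(overridden)

    def override_rate(self) -> float:
        if not self.decisions:
            return 0.0
        return sum(self.decisions) / len(self.decisions)

    def needs_audit(self) -> bool:
        """Flag the agent for review when humans disagree with it too often."""
        return self.override_rate() > self.alert_threshold


monitor = OverrideRateMonitor(window=10, alert_threshold=0.2)
for overridden in [False, False, True, False, True, True, False, False, False, False]:
    monitor.record(overridden)
print(monitor.override_rate(), monitor.needs_audit())  # 0.3 True
```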

Ensuring ethics for a bright future of agentic AI

Agentic AI offers incredible, transformative potential across a huge variety of industries. The ability of these tools to learn, decide, and act autonomously presents virtually limitless possibilities – but with great power comes great responsibility. As agentic AI users, we have a shared duty to develop and deploy these systems ethically.

Ongoing dialogue about the ethical implications of agentic AI in IT management is a crucial part of keeping these systems ethical in the future. It’s equally important to design and invest in systems that align with human values and organizational integrity. As you consider integrating agentic AI into your workforce, make sure you choose a tool from a company that is committed to ethical AI usage.

With Atera’s AI Copilot, you can trust in an ethical agentic AI tool made to help free up your team, boost productivity, and future-proof your IT operations. Want to take AI Copilot for a test drive? Test out our full suite of Autonomous IT tools with a 30-day free trial, no credit card required.
