
It could be a normal Tuesday when your phone lights up with complaints that the customer portal is “completely frozen,” and by the time you pull up the monitoring dashboard, you’ve got 47 open tickets and counting. The culprit? A single poorly optimized query grinding your database to a halt and taking down half your application stack with it. For a mid-sized company, incidents like this add up fast, and the cost only grows with the size of the infrastructure. Persistent database slowdowns drain budgets while your team burns hours chasing symptoms instead of solving root causes.

Database performance problems don’t announce themselves with flashing alarms. They creep in gradually through bloated indexes, outdated statistics, and infrastructure that’s barely keeping pace with growth. But here’s the good news: performance optimization isn’t guesswork. It’s systematic, measurable, and entirely achievable once you understand what’s actually slowing you down and how to fix it.

Here’s everything you need to know about understanding your database performance and how to optimize it.

The hidden costs of poor database performance

Poor database performance isn’t just a technical issue. It quietly erodes revenue, productivity, and customer trust. When your database struggles, the ripple effects touch every part of your business.

For example, when core applications slow down because the database is struggling, users abandon transactions, conversion rates drop, and customer satisfaction plummets. E-commerce studies show that even a few extra seconds of delay can significantly reduce conversions and compound to millions of dollars annually in lost productivity and revenue.

Employees waste time waiting for reports, screens, and batch jobs to load. Those delays accumulate into hours per week per person, which is time that could be spent serving customers or executing strategic work instead of staring at loading screens.

The trap of IT firefighting teams

When databases are underperforming, IT teams get trapped in constant firefighting mode. They’re chasing incidents, reacting to outages, and doing late-night emergency tuning. According to Cyber Defense Magazine, this reactive cycle drives burnout, stalls modernization projects, and crowds out strategic initiatives like refactoring schemas, improving observability, or adopting automation.

“Emergency fixes for performance incidents can cost up to 10x more than proactive optimization.”

That multiplier comes from overtime, external consultants, and business disruption. Meanwhile, your best people spend their expertise putting out fires rather than building the systems that prevent them. And it doesn’t stop there: these issues usually cascade across the infrastructure.

Slow databases rarely fail in isolation. As queries back up, applications retry more often, connection pools fill, and upstream services start to struggle. This forces teams to overprovision compute, memory, and storage just to stay afloat. That “throw hardware at it” response pushes cloud and licensing bills up without actually addressing root causes, so incidents keep recurring.

Organizations end up paying for larger instances, faster disks, and more replicas simply to mask underlying design and tuning issues. This undermines the ROI of their data platforms while the real problems continue festering beneath the surface.


What “improving database performance” actually looks like

The good news? Database performance isn’t mysterious. It’s systematic, measurable, and entirely within your control once you understand what drives it.

Performance improvement starts with understanding what you can actually control. Database performance depends on two categories of factors: internal system resources and external environment conditions.

Internal system factors include the hardware and software components that directly execute your queries:

- CPU speed determines how quickly queries execute. Higher clock speeds and more cores increase the number of transactions the database can process per second, according to Severalnines.

- Memory (RAM) is equally critical because adequate RAM reduces disk reads, while insufficient memory can slow queries significantly. More RAM allows databases to cache working sets in memory.

- Disk I/O throughput directly affects read and write speed, with SSDs offering approximately 10 times faster IOPS than traditional HDDs, according to TV Tech.

- Index structures can reduce query times from seconds to milliseconds when properly designed, according to the International Journal for Science and Research, but poor indexing can increase latency significantly.

- Cache efficiency minimizes repeated queries, with a high cache hit rate delivering roughly a 2.7x speedup (about 63% less time per request).

External environment factors shape how your database interacts with the broader infrastructure:

- Workload mix matters because OLTP and OLAP workloads stress systems differently, which means that mixed workloads can actually reduce efficiency.

- Concurrency affects performance as high user concurrency increases lock contention and reduces performance.

- Network latency adds milliseconds per query, with cloud-hosted databases potentially facing two orders of magnitude of added delay (such as going from 0.1ms to 10ms), according to Nexperteam.

- Network bandwidth limitations throttle throughput. Gigabit Ethernet carries about 1,000 Mb/s (roughly 125 MB/s), compared with 100 Mb/s or less (about 12.5 MB/s) on older setups.

These factors are measurable and tunable. Once you understand which ones are constraining your system, you can make targeted improvements rather than guessing.


10 strategies to optimize database performance

Here are 10 proven techniques that deliver measurable performance improvements across different database components.

1. Index optimization

This means creating and maintaining database indexes (data structures that speed up data retrieval) to help queries find information faster without scanning entire tables. It focuses on the query engine, indexing subsystem, and storage engine to impact optimizer plan selection, reduce table and page scans, and lower buffer-pool churn.

The goal is to shorten execution paths and reduce I/O by enabling selective access to rows.

Here’s how to implement this strategy:

- Identify slow queries: Use EXPLAIN/ANALYZE and review top wait events to find queries that need optimization.

- Design targeted indexes: Create composite indexes that match your filter conditions (WHERE clauses) and sort operations (ORDER BY clauses).

- Add covering indexes: For frequently run queries, create indexes that include all columns needed so the database doesn’t have to look up additional data.

- Test before deploying: Run EXPLAIN on your queries before and after adding indexes to verify the improvement.

- Clean up redundant indexes: Remove any unused or duplicate indexes that slow down write operations.

- Watch for common mistakes: Avoid over-indexing (too many indexes hurt insert/update performance), misordered composite key columns (wrong order reduces effectiveness), and outdated statistics (causes the optimizer to make poor decisions).
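
The before-and-after check described above can be sketched with Python's built-in sqlite3 module. This is a minimal illustration rather than a production recipe: the orders table, its columns, and the index name are hypothetical, and other engines expose the same idea through their own EXPLAIN output.

```python
import sqlite3


def plan(conn, sql, params=()):
    """Return the readable steps of SQLite's query plan for a statement."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[3] for row in rows]  # column 3 holds the detail text


conn = sqlite3.connect(":memory:")
# Hypothetical table used only for this illustration.
conn.execute(
    "CREATE TABLE orders ("
    " id INTEGER PRIMARY KEY,"
    " customer_id INTEGER NOT NULL,"
    " created_at TEXT NOT NULL,"
    " total_cents INTEGER NOT NULL)"
)

QUERY = (
    "SELECT created_at, total_cents FROM orders "
    "WHERE customer_id = ? ORDER BY created_at DESC LIMIT 10"
)

# Plan before indexing: expect a full table scan.
before = plan(conn, QUERY, (42,))

# Composite index: the equality filter column first, then the sort column.
# Adding total_cents lets the index cover every column the query reads.
conn.execute(
    "CREATE INDEX idx_orders_customer_created "
    "ON orders (customer_id, created_at, total_cents)"
)

# Plan after indexing: expect a search that uses the new index.
after = plan(conn, QUERY, (42,))

print("before:", before)
print("after: ", after)
```

Comparing the two plans makes the change visible: the first reports a scan of the whole table, while the second searches through the index. The exact wording of the plan text varies between SQLite versions.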

2. Query rewriting

This means restructuring SQL queries to use more efficient syntax and logic that the database can execute faster. It focuses on the query engine, optimizer, indexing subsystem, and buffer pool to lower CPU usage per query, reduce page fetches, and improve plan stability.

The goal is to shorten execution paths and reduce CPU and reads.

Here’s how to implement this strategy:

- Profile slow queries: Extract predicates and join conditions to understand what’s slowing them down. Use database tools like EXPLAIN or EXPLAIN ANALYZE to see how your query actually executes. This reveals whether it’s scanning entire tables, using the wrong indexes, or performing expensive operations like sorting or joining large datasets.

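- Remove non-sargable predicates: Wrapping an indexed column in a function (like UPPER(column) = ‘X’) usually prevents the database from using the index. Compare the bare column instead (for example, store values in a consistent case and use column = ‘x’, or replace YEAR(created_at) = 2024 with a date range), or create an expression index that matches the function call where your database supports one.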

- Replace SELECT * with specific columns: Only request the columns you actually need to reduce data transfer.

- Use keyset pagination instead of OFFSET: For large result sets, keyset pagination performs much better than OFFSET.

- Validate improvements: Check runtime metrics (duration, reads, CPU) to confirm your changes worked.
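
To make the pagination advice concrete, here is a small sketch using Python's built-in sqlite3 module. The events table and the helper names are assumptions made for the example. The keyset version uses the last id already returned as a cursor, so the database can seek straight to the next page instead of walking past every skipped row.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical table with 25 sample rows.
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT NOT NULL)")
conn.executemany(
    "INSERT INTO events (id, payload) VALUES (?, ?)",
    [(i, f"event-{i}") for i in range(1, 26)],
)


def page_by_offset(conn, page, size):
    """OFFSET pagination: skipped rows are still read and discarded."""
    return conn.execute(
        "SELECT id, payload FROM events ORDER BY id LIMIT ? OFFSET ?",
        (size, page * size),
    ).fetchall()


def page_by_keyset(conn, last_seen_id, size):
    """Keyset pagination: seek past the last row the caller already has."""
    return conn.execute(
        "SELECT id, payload FROM events WHERE id > ? ORDER BY id LIMIT ?",
        (last_seen_id, size),
    ).fetchall()


def iterate_keyset(conn, size):
    """Yield pages until the table is exhausted, carrying the cursor forward."""
    last_seen_id = 0  # ids start at 1 in this sample data
    while True:
        rows = page_by_keyset(conn, last_seen_id, size)
        if not rows:
            return
        yield rows
        last_seen_id = rows[-1][0]


keyset_pages = list(iterate_keyset(conn, 10))
```

Both approaches return the same rows here. The difference shows up on large tables, where a deep OFFSET grows slower with every page while the keyset lookup stays an index seek.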


3. Normalization and denormalization balance

This means organizing data tables to eliminate redundancy (normalization) while strategically duplicating some data (denormalization) to avoid expensive joins. It focuses on the storage engine, query engine, and indexing subsystem to control join depth and row width, affecting page density and buffer-pool efficiency.

The goal is to diminish contention and reduce join cost while preserving data integrity.

Here’s how to implement this strategy:

- Map your workload patterns: Identify which tables are read-heavy versus write-heavy and where expensive joins occur.

- Normalize transactional tables: Keep core transactional entities normalized to prevent update anomalies and reduce write contention.

- Denormalize read-heavy aggregates: Create materialized views or precomputed rollups for frequently-accessed summary data.

- Add incremental refresh logic: Use triggers or scheduled jobs to keep denormalized data synchronized with source tables.

- Monitor the balance: Watch for over-denormalization (increases update complexity) and excessive joins (inflates latency).
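
One way to picture the incremental refresh step is a trigger that keeps a rollup table in sync with a normalized source table. The sketch below uses Python's built-in sqlite3 module. The orders and daily_sales tables are hypothetical, and the trigger only handles inserts, so updates and deletes would need their own triggers in a real system.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Normalized source of truth: one row per order (hypothetical schema).
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        order_day TEXT NOT NULL,
        amount_cents INTEGER NOT NULL
    );

    -- Denormalized rollup for read-heavy dashboards.
    CREATE TABLE daily_sales (
        order_day TEXT PRIMARY KEY,
        order_count INTEGER NOT NULL DEFAULT 0,
        total_cents INTEGER NOT NULL DEFAULT 0
    );

    -- Incremental refresh: adjust the rollup whenever an order is inserted.
    CREATE TRIGGER orders_after_insert AFTER INSERT ON orders
    BEGIN
        INSERT OR IGNORE INTO daily_sales (order_day) VALUES (NEW.order_day);
        UPDATE daily_sales
           SET order_count = order_count + 1,
               total_cents = total_cents + NEW.amount_cents
         WHERE order_day = NEW.order_day;
    END;
    """
)

conn.executemany(
    "INSERT INTO orders (order_day, amount_cents) VALUES (?, ?)",
    [("2024-05-01", 1200), ("2024-05-01", 800), ("2024-05-02", 500)],
)


def daily_summary(conn):
    """Cheap read path: the precomputed rollup, no aggregation at query time."""
    return conn.execute(
        "SELECT order_day, order_count, total_cents "
        "FROM daily_sales ORDER BY order_day"
    ).fetchall()


def recomputed_summary(conn):
    """Expensive read path, useful as a periodic consistency check."""
    return conn.execute(
        "SELECT order_day, COUNT(*), SUM(amount_cents) "
        "FROM orders GROUP BY order_day ORDER BY order_day"
    ).fetchall()
```

Comparing daily_summary with recomputed_summary on a schedule is a simple way to detect drift between the denormalized copy and its source.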

4. Partitioning and sharding

This means splitting large database tables into smaller, more manageable pieces either within one database (partitioning) or across multiple servers (sharding). It focuses on the storage engine, query engine, and hardware/distribution layer to reduce index depth, lower per-partition contention, and enable parallel scans.

The goal is to increase parallelism and shrink per-query working sets.

Here’s how to implement this strategy:

- Choose the right partition key: Select a key tied to your access patterns, such as date ranges for time-series data or tenant ID for multi-tenant systems.

- Implement partition pruning: Configure your database to skip irrelevant partitions when executing queries.

- Use local indexes: Create indexes within each partition rather than global indexes across all partitions.

- Monitor for hotspots: Watch for skewed partition keys that concentrate activity on specific shards.

- Plan for growth: Add tiering for old data and establish a rebalancing strategy as data volume grows.
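
The partition key and pruning ideas can be illustrated in plain Python. This sketch assumes monthly partitions named events_YYYY_MM, which is a hypothetical naming scheme. Most databases perform pruning internally, so the code only shows the logic: a date-range query should touch only the partitions that overlap the range. The skew_ratio helper is likewise an illustrative way to spot hotspots.

```python
from datetime import date


def partition_name(day: date) -> str:
    """Map a date to its monthly partition (hypothetical naming scheme)."""
    return f"events_{day.year:04d}_{day.month:02d}"


def partitions_for_range(start: date, end: date) -> list[str]:
    """List only the monthly partitions overlapping [start, end], inclusive."""
    if end < start:
        raise ValueError("end must not be before start")
    names = []
    year, month = start.year, start.month
    while (year, month) <= (end.year, end.month):
        names.append(f"events_{year:04d}_{month:02d}")
        month += 1
        if month > 12:
            year, month = year + 1, 1
    return names


def skew_ratio(rows_per_partition: dict[str, int]) -> float:
    """Largest partition relative to the average size.

    Values far above 1.0 suggest the partition key concentrates activity.
    """
    counts = list(rows_per_partition.values())
    if not counts or sum(counts) == 0:
        raise ValueError("need at least one non-empty partition")
    return max(counts) / (sum(counts) / len(counts))
```

For example, a query covering 20 December 2023 through 3 February 2024 maps to three partitions, no matter how many years of history the table holds.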


5. Application-level caching

This means storing frequently accessed data in fast memory (like Redis) so applications don’t need to repeatedly query the database for the same information. It focuses on the application caching layer, network, and query engine (indirectly) to offload repeated reads, stabilize database load, and improve p95 latency.

The goal is to reduce I/O and CPU by serving hot data from memory.

Here’s how to implement this strategy:

- Identify cacheable data: Look for read operations that are idempotent and have predictable access patterns (like product catalogs or user profiles). Idempotent means the operation produces the same result every time it’s called. For example, fetching a user’s profile by ID always returns the same data until that profile is updated, making it perfect for caching.

- Set up Redis with namespaced keys: Implement a caching layer using Redis with clear key naming conventions and appropriate TTL (time-to-live) settings. Redis is an in-memory data store that acts as a high-speed cache between your application and database. Key naming conventions organize your cached data (like “user:12345” or “product:SKU-789”), while TTL settings determine how long data stays in cache before expiring (for example, 300 seconds for relatively static data or 60 seconds for frequently changing data).

- Wire cache invalidations: Ensure cached data is refreshed or removed when the underlying database records change. You can do this by adding logic to your application code that deletes or updates the cache entry whenever you update the database record, or by using database triggers that notify your caching layer when changes occur.

- Monitor cache performance: Track hit/miss ratios and watch for stale data issues when the cached version doesn’t match the current database version. You can identify this by tracking the timestamps of cached entries versus database updates, monitoring user complaints about seeing outdated information, or running periodic validation checks that compare cache contents against the source database.

- Handle cache failures gracefully: Avoid cache stampede (when many requests hit the database simultaneously after cache expiration) with proper locking mechanisms.
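
The read and invalidation flow above is often called the cache-aside pattern, and it can be sketched without any external service. In the example below, TTLCache is a small in-process stand-in for Redis, ProfileService is a hypothetical application class, and a plain dict plays the role of the database. None of this is a real Redis client API. The sketch also leaves out stampede protection, which would need a per-key lock around the database read.

```python
import time


class TTLCache:
    """Tiny in-process stand-in for a Redis-style cache with per-key expiry."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._items = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._items.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._items[key]  # expired entries count as a miss
            return None
        return value

    def set(self, key, value, ttl_seconds):
        self._items[key] = (self._clock() + ttl_seconds, value)

    def delete(self, key):
        self._items.pop(key, None)


class ProfileService:
    """Cache-aside reads plus explicit invalidation on writes."""

    def __init__(self, cache, database, ttl_seconds=300):
        self.cache = cache
        self.database = database  # assumption: a dict standing in for the DB
        self.ttl_seconds = ttl_seconds
        self.db_reads = 0  # counter so the cache's effect is observable

    def get_profile(self, user_id):
        key = f"user:{user_id}"  # namespaced key
        cached = self.cache.get(key)
        if cached is not None:
            return cached
        self.db_reads += 1  # cache miss: fall through to the database
        profile = dict(self.database[user_id])
        self.cache.set(key, profile, self.ttl_seconds)
        return profile

    def update_profile(self, user_id, **changes):
        self.database[user_id].update(changes)
        self.cache.delete(f"user:{user_id}")  # drop the now-stale entry
```

Deleting the key on write, rather than updating it in place, keeps the logic simple: the next read repopulates the cache from the database.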

6. Buffer pool tuning

This means allocating the right amount of RAM for the database to hold frequently accessed data pages in memory instead of reading from disk. It focuses on the buffer pool, memory manager, and storage engine to directly affect logical versus physical reads and checkpoint behavior.

The goal is to reduce disk I/O and average wait times by increasing cache hit ratios.

Here’s how to implement this strategy:

- Measure current performance: Track cache hit ratio, page life expectancy, and I/O wait times to establish your baseline.

- Increase buffer allocations: Add more RAM to your buffer pool to keep more data pages in memory (target cache hit ratios above 90%).

- Align page size with workload: Set page sizes appropriate to your typical row width and access patterns.

- Reassess after other optimizations: After implementing index and query fixes, re-evaluate buffer pool needs as the workload may have changed.

- Avoid overcommitting memory: Don’t allocate so much RAM to the buffer pool that the system starts swapping, and pay attention to NUMA architecture on multi-socket systems. A safe rule is to leave 10-20% of total system RAM free for the operating system and other processes. If you allocate 95% to your database buffer pool, the OS may start swapping to disk, which destroys performance. On NUMA (Non-Uniform Memory Access) systems with multiple CPU sockets, ensure your database is configured to allocate memory local to each CPU socket rather than accessing remote memory across sockets, which adds latency.
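
The arithmetic behind these guidelines is simple enough to sketch. The two helper functions below are illustrative names, not part of any database API. They compute a cache hit ratio from logical and physical read counters and an upper bound for the buffer pool after reserving headroom for the operating system.

```python
def cache_hit_ratio(logical_reads: int, physical_reads: int) -> float:
    """Fraction of page requests served from memory rather than disk."""
    if logical_reads <= 0:
        raise ValueError("logical_reads must be positive")
    if not 0 <= physical_reads <= logical_reads:
        raise ValueError("physical_reads must be between 0 and logical_reads")
    return (logical_reads - physical_reads) / logical_reads


def max_buffer_pool_gb(total_ram_gb: float, os_reserve_fraction: float = 0.20) -> float:
    """Upper bound for the buffer pool once OS headroom is set aside.

    The default 20% reserve follows the 10-20% rule of thumb in the text.
    """
    if total_ram_gb <= 0:
        raise ValueError("total_ram_gb must be positive")
    if not 0.0 <= os_reserve_fraction < 1.0:
        raise ValueError("os_reserve_fraction must be in [0, 1)")
    return total_ram_gb * (1.0 - os_reserve_fraction)
```

As an example, 1,000 logical reads with 50 physical reads is a 95% hit ratio, and a 64 GB server with a 20% reserve leaves about 51 GB as the ceiling for the buffer pool.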

7. Storage optimization

This means upgrading to faster storage hardware (SSDs/NVMe) and configuring storage systems (RAID) to handle database reads and writes more efficiently. It focuses on the hardware layer, storage engine, and log subsystem to impact fsync latency, checkpoint duration, and recovery speed.

The goal is to increase throughput and lower I/O wait times.

Here’s how to implement this strategy:

- Profile your I/O patterns: Understand your read/write mix to choose the right storage configuration. For example, if your database handles 80% reads and 20% writes, you might prioritize read-optimized storage, while a write-heavy workload (like logging or real-time data ingestion) benefits more from storage configurations that handle sequential writes efficiently, like NVMe with optimized write caching.

- Separate logs from data: Isolate write-ahead logs and data files on separate storage devices to reduce contention.

- Choose appropriate RAID levels: Use RAID 10 for write-heavy OLTP workloads (balanced performance) and verify controller cache and battery backup.

- Migrate to SSD or NVMe: Move hot data and transaction logs to solid-state storage for order-of-magnitude IOPS improvements.

- Tune log settings: Adjust WAL or redo log segment sizes and sync frequency to match your workload requirements. WAL (Write-Ahead Log) or redo logs record all database changes before they’re written to disk, ensuring data durability. You can tune segment sizes (larger segments reduce the overhead of creating new log files but use more disk space) and sync frequency (how often logs are flushed to disk, where syncing after every transaction maximizes safety but hurts performance).

8. Transaction isolation tuning

This means adjusting how the database handles simultaneous transactions to reduce conflicts and waiting between concurrent users. It focuses on the transaction manager, lock subsystem, and query engine to directly affect waits, deadlocks, and TPS under high concurrency.

The goal is to diminish lock contention and improve transaction throughput.

Here’s how to implement this strategy:

- Measure current contention: Track lock waits, deadlocks, and identify long-running transactions in your system.

- Use MVCC where appropriate: Implement Multi-Version Concurrency Control or row-level locking to reduce blocking. MVCC allows readers to see consistent snapshots of data while writers make changes simultaneously, meaning queries don’t have to wait for locks to be released before they can read data.

- Tune isolation levels: Consider READ COMMITTED or SNAPSHOT isolation instead of stricter levels like SERIALIZABLE for OLTP workloads. Isolation levels control how much of each other’s concurrent changes transactions can see, so stricter levels prevent more data inconsistencies but create more blocking, while looser levels allow better concurrency at the cost of some consistency guarantees.

- Shorten transaction scopes: Break long batch transactions into smaller units to reduce the time locks are held.

- Add retry logic: Implement retries with exponential backoff for transactions that encounter conflicts.
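
Retry with exponential backoff can be sketched in a few lines of Python. TransactionConflict is a hypothetical exception standing in for whatever error your database driver raises on a deadlock or serialization failure, and the default delays are illustrative rather than recommended values.

```python
import random
import time


class TransactionConflict(Exception):
    """Hypothetical error for a lost lock, deadlock, or serialization failure."""


def run_with_retry(operation, max_attempts=5, base_delay=0.05, max_delay=2.0,
                   sleep=time.sleep, rng=random.random):
    """Run operation(), retrying on conflicts with jittered exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransactionConflict:
            if attempt == max_attempts:
                raise  # out of attempts: surface the conflict to the caller
            # Delay doubles each attempt, capped at max_delay.
            backoff = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Random jitter spreads out retries from competing clients.
            sleep(backoff * rng())
```

The operation passed in should wrap a complete transaction, from begin through commit or rollback, so that each retry starts from a clean state.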

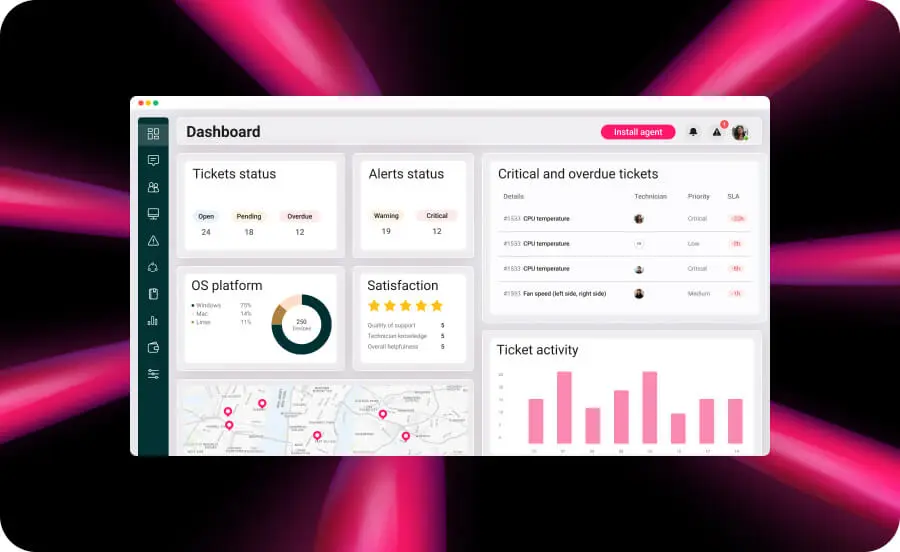

9. Monitoring and observability

This means setting up systems to continuously track database performance metrics and alert teams when problems arise. It focuses on the observability stack, using performance monitoring software to give you deep visibility into the query engine, buffer pool, and storage.

The goal is to shorten time-to-detect and prevent regressions before they reach end users, with proactive remediation across both the database and the underlying infrastructure.

Here’s how to implement this strategy:

- Define your SLOs: Establish service level objectives for p95 latency, TPS, I/O waits, and lock waits that align with business requirements.

- Configure dashboards and alerts: Set up monitoring tools with clear visualizations and alerts for when metrics exceed thresholds.

- Add query-level profiling: Track individual query performance to catch plan changes and regressions.

- Create runbooks: Document response procedures for common alerts so your team knows exactly what to do when IT issues arise.

- Review trends regularly: Schedule weekly reviews of performance trends to catch gradual degradation before it becomes critical.
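
A p95 latency check against an SLO can be sketched as follows. The function names are illustrative, and the percentile uses the nearest-rank method, which is one of several common definitions. Monitoring tools may interpolate and report slightly different values.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of
    all samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def breaches_slo(latencies_ms, p95_target_ms):
    """True when the observed p95 latency exceeds the SLO target."""
    return percentile(latencies_ms, 95) > p95_target_ms
```

Percentiles are preferred over averages for this kind of alert because a handful of very slow queries can hide behind a healthy-looking mean.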


10. Maintenance and statistics

This means regularly updating the database’s internal statistics about data distribution and cleaning up fragmented or unused space. It focuses on optimizer statistics, storage engine, and indexing subsystem to impact cardinality estimates, plan choice, and physical storage efficiency.

The goal is to improve optimizer decisions and reduce storage bloat.

Here’s how to implement this strategy:

- Automate statistics updates: Configure automatic statistics collection after bulk loads or significant data changes. Most databases have built-in auto-statistics features that you can enable, or you can create scheduled jobs or triggers that run ANALYZE/UPDATE STATISTICS commands after known data-change events like nightly ETL processes.

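- Schedule vacuum and compaction: Set up regular table maintenance to reclaim space and reduce fragmentation during low-traffic windows. Use your database’s job scheduler to run VACUUM or an equivalent compaction command during off-peak hours. Reserve heavier operations such as PostgreSQL’s VACUUM FULL for planned maintenance windows, because it rewrites the table and blocks other access while it runs.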

- Rebuild fragmented indexes: Monitor index fragmentation levels and rebuild when they exceed acceptable thresholds. A common rule of thumb treats fragmentation below 10-15% as acceptable, 15-30% as worth reorganizing, and above 30% as needing a full rebuild, though exact thresholds vary by database engine and workload.

- Track plan stability: After maintenance operations, verify that query plans haven’t unexpectedly changed.
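
The fragmentation thresholds above translate directly into a small decision helper that a scheduled maintenance job could call. The function names and the default cutoffs of 15% and 30% are assumptions taken from the ranges in the text, so adjust them to your engine's guidance.

```python
def index_maintenance_action(fragmentation_pct, reorganize_at=15.0, rebuild_at=30.0):
    """Classify an index by fragmentation percentage.

    Returns "none", "reorganize", or "rebuild". The default thresholds are
    illustrative and come from the rule of thumb in the text.
    """
    if not 0 <= fragmentation_pct <= 100:
        raise ValueError("fragmentation_pct must be between 0 and 100")
    if fragmentation_pct > rebuild_at:
        return "rebuild"
    if fragmentation_pct >= reorganize_at:
        return "reorganize"
    return "none"


def maintenance_plan(fragmentation_by_index):
    """Map each index name to an action, skipping indexes that need nothing."""
    plan = {}
    for name, pct in fragmentation_by_index.items():
        action = index_maintenance_action(pct)
        if action != "none":
            plan[name] = action
    return plan
```

Feeding this helper with fragmentation figures from your database's own statistics views keeps maintenance targeted at the indexes that need it instead of rebuilding everything on a schedule.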

Turn database performance into a competitive advantage

Database performance isn’t just about raw speed. It’s about aligning your technical infrastructure with business outcomes, and optimization doesn’t stop at implementation. The key to sustained performance is integrating these improvements into your broader IT operations strategy, embedding performance baselines into your CI/CD pipelines, and shifting from reactive firefighting to preventive maintenance.

This is where unified IT management becomes essential. Traditional database-specific monitoring tools give you deep visibility into query performance and storage metrics, but they operate in isolation without seeing the infrastructure, network, and application layers that also impact database health. A platform like Atera bridges this gap by monitoring server resources, network performance, and service status across your entire IT infrastructure. When database slowdowns stem from memory constraints, network latency, or server resource contention, Atera’s unified approach helps you diagnose and resolve infrastructure-related issues faster than managing siloed monitoring tools.

